MCP servers are programs that expose specific capabilities to AI applications through standardized protocol interfaces.

Common examples include file system servers for document access, database servers for data queries, GitHub servers for code management, Slack servers for team communication, and calendar servers for scheduling.

Servers provide functionality through three building blocks:

| Feature | Explanation | Examples | Who controls it |
|---|---|---|---|
| Tools | Functions that your LLM can actively call, deciding when to use them based on user requests. Tools can write to databases, call external APIs, modify files, or trigger other logic. | Search flights, send messages, create calendar events | Model |
| Resources | Passive data sources that provide read-only access to information for context, such as file contents, database schemas, or API documentation. | Retrieve documents, access knowledge bases, read calendars | Application |
| Prompts | Pre-built instruction templates that tell the model to work with specific tools and resources. | Plan a vacation, summarize my meetings, draft an email | User |

We will use a hypothetical scenario to demonstrate the role of each of these features, and show how they can work together.

Tools enable AI models to perform actions. Each tool defines a specific operation with typed inputs and outputs. The model requests tool execution based on context.

Tools are schema-defined interfaces that LLMs can invoke. MCP uses JSON Schema for validation. Each tool performs a single operation with clearly defined inputs and outputs. Tools may require user consent prior to execution, helping to ensure users maintain control over actions taken by a model.

Protocol operations:

| Method | Purpose | Returns |
|---|---|---|
| `tools/list` | Discover available tools | Array of tool definitions with schemas |
| `tools/call` | Execute a specific tool | Tool execution result |
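As a rough illustration of the two operations above, the sketch below builds the JSON-RPC 2.0 request objects a client might send. The helper names (`buildToolsListRequest`, `buildToolsCallRequest`) and the `nextId` counter are hypothetical and not part of any SDK; only the method names and the `name`/`arguments` parameter layout come from the protocol.

```typescript
// Minimal JSON-RPC 2.0 request shape (illustrative, not an SDK type).
interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

// Hypothetical per-connection request counter.
let nextId = 1;

// Build a tools/list request; no parameters are needed for the first page.
function buildToolsListRequest(): JsonRpcRequest {
  return { jsonrpc: "2.0", id: nextId++, method: "tools/list" };
}

// Build a tools/call request for a named tool and its arguments.
function buildToolsCallRequest(
  name: string,
  args: Record<string, unknown>
): JsonRpcRequest {
  return {
    jsonrpc: "2.0",
    id: nextId++,
    method: "tools/call",
    params: { name, arguments: args },
  };
}

const listRequest = buildToolsListRequest();
const callRequest = buildToolsCallRequest("searchFlights", {
  origin: "NYC",
  destination: "Barcelona",
  date: "2024-06-15",
});

console.log(JSON.stringify(listRequest));
console.log(JSON.stringify(callRequest, null, 2));
```

In practice an SDK client normally constructs these messages for you; the sketch only shows what travels over the transport.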

Example tool definition:

```typescript
{
  name: "searchFlights",
  description: "Search for available flights",
  inputSchema: {
    type: "object",
    properties: {
      origin: { type: "string", description: "Departure city" },
      destination: { type: "string", description: "Arrival city" },
      date: { type: "string", format: "date", description: "Travel date" }
    },
    required: ["origin", "destination", "date"]
  }
}
```

Tools enable AI applications to perform actions on behalf of users. In a travel planning scenario, the AI application might use several tools to help book a vacation:

Flight Search

searchFlights(origin: "NYC", destination: "Barcelona", date: "2024-06-15")

Queries multiple airlines and returns structured flight options.

Calendar Blocking

createCalendarEvent(title: "Barcelona Trip", startDate: "2024-06-15", endDate: "2024-06-22")

Marks the travel dates in the user's calendar.

Email Notification

sendEmail(to: "team@work.com", subject: "Out of Office", body: "...")

Sends an automated out-of-office message to colleagues.

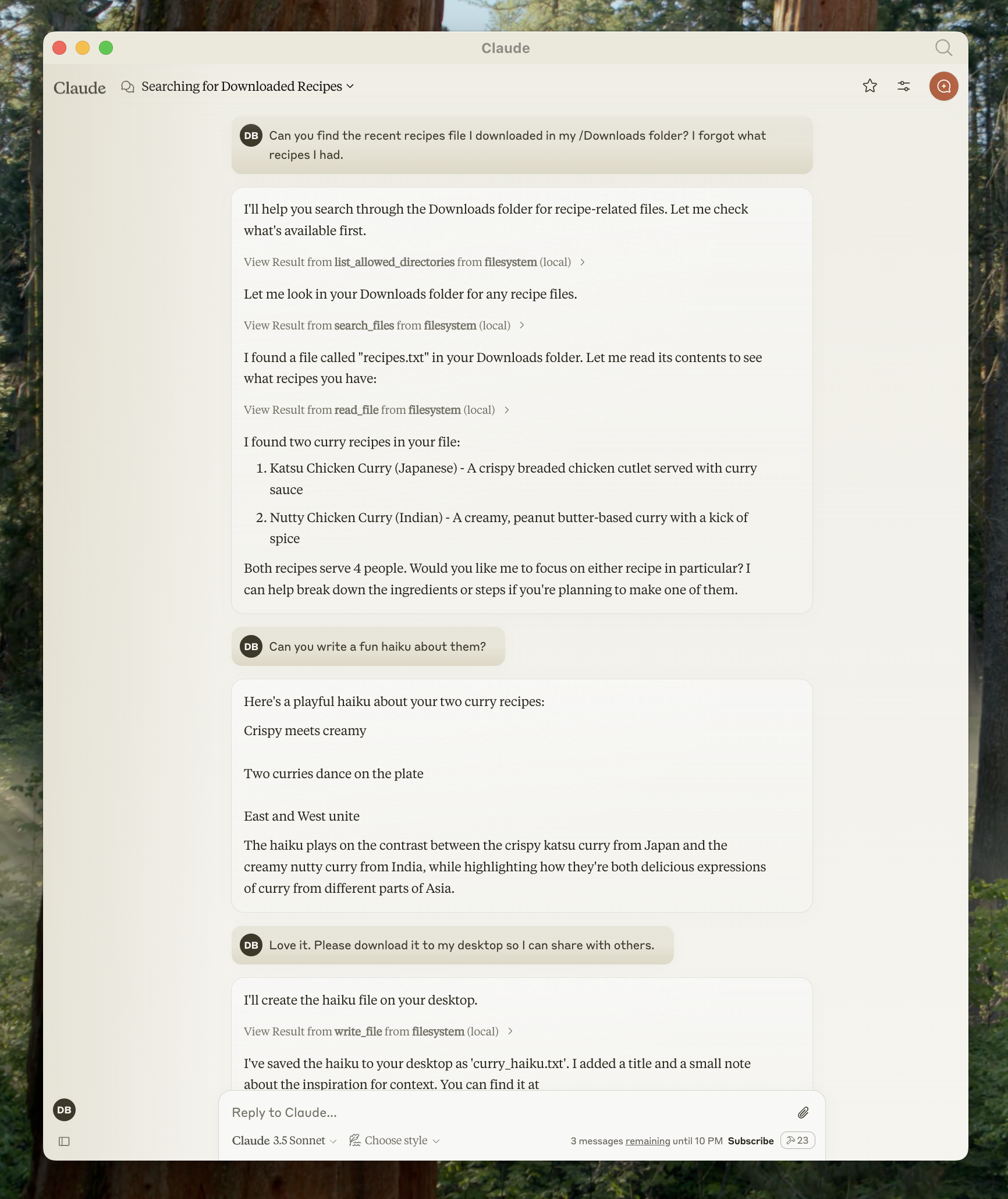

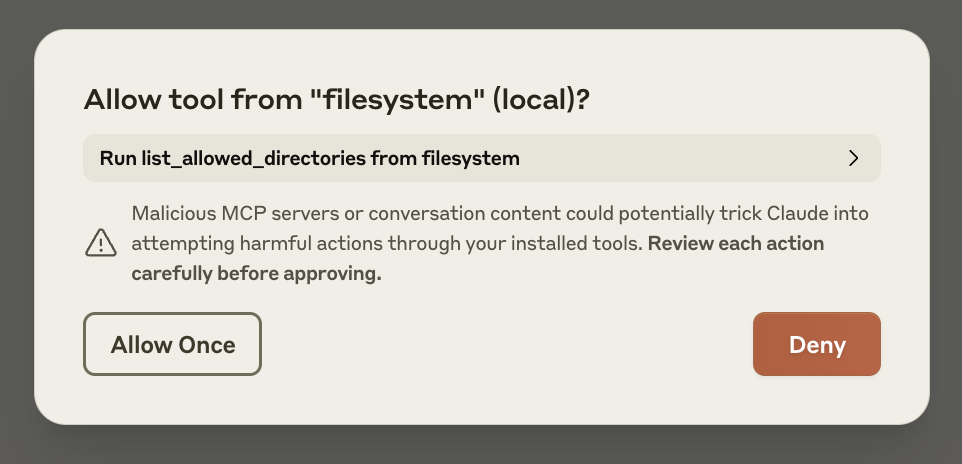

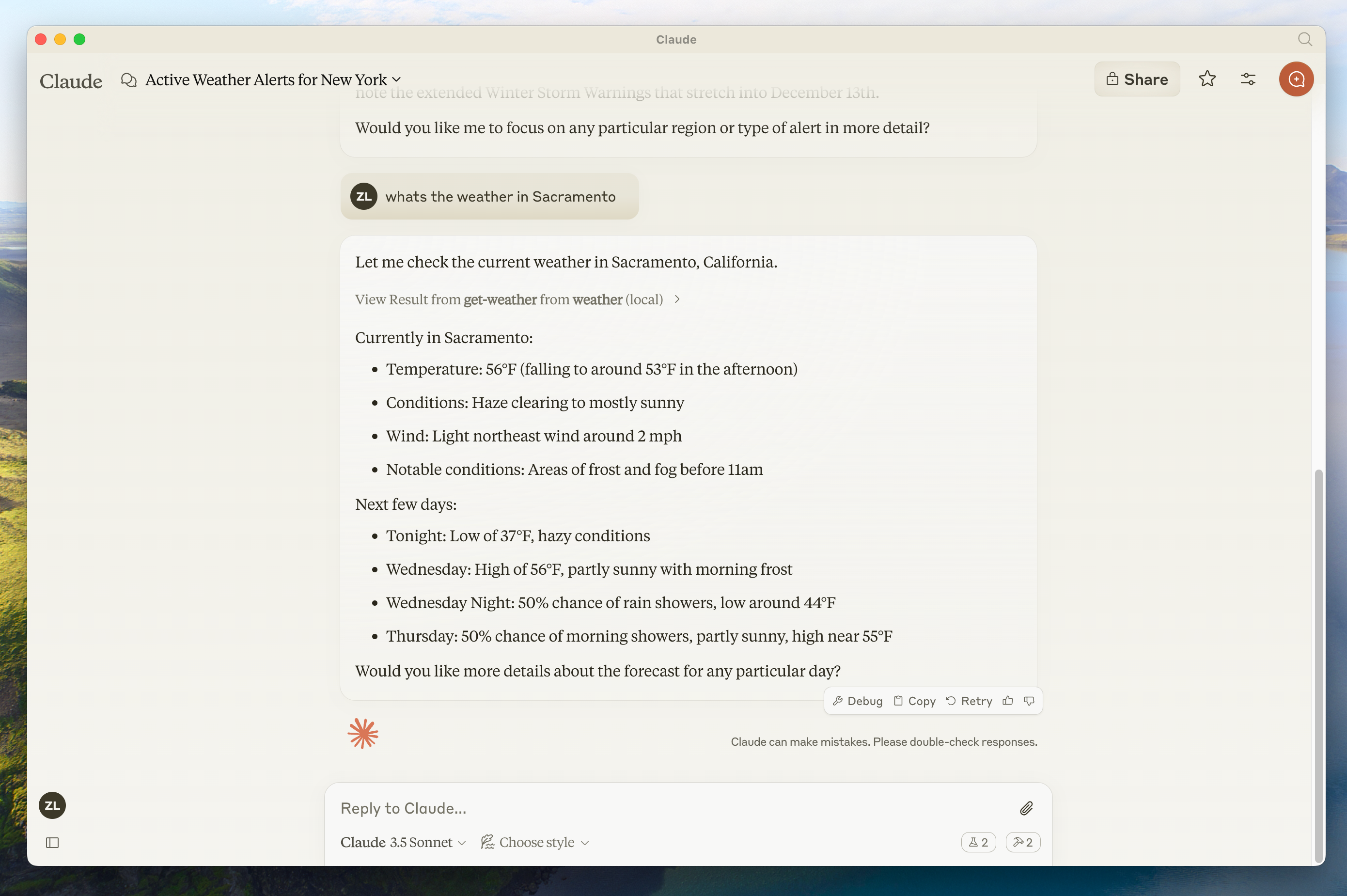

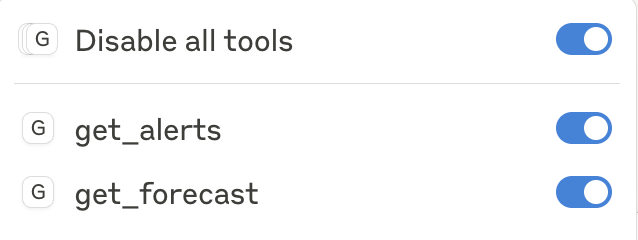

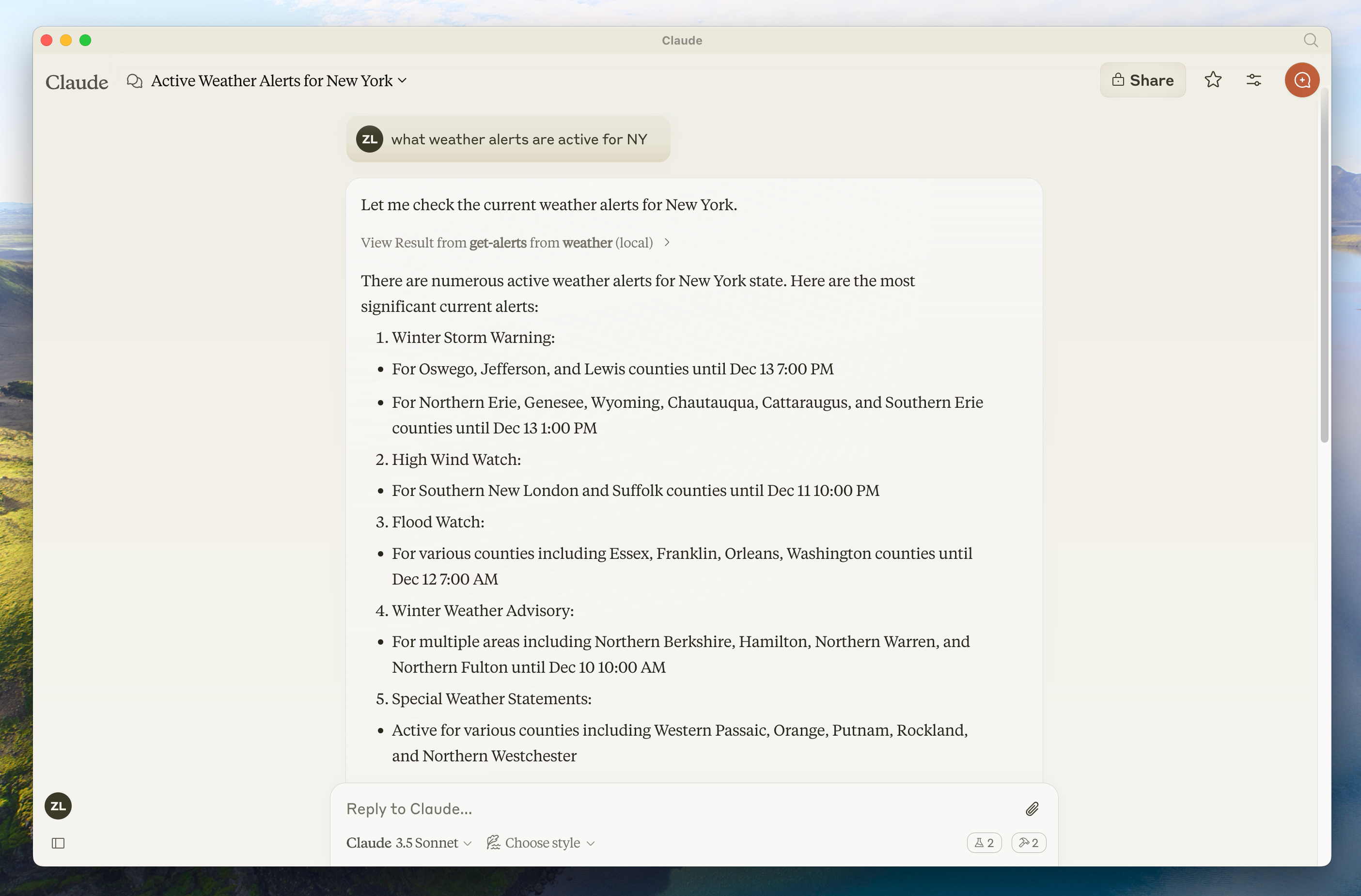

Tools are model-controlled, meaning AI models can discover and invoke them automatically. However, MCP emphasizes human oversight through several mechanisms.

For trust and safety, applications can implement user control through various mechanisms, such as:

- Displaying available tools in the UI, enabling users to define whether a tool should be made available in specific interactions

- Approval dialogs for individual tool executions

- Permission settings for pre-approving certain safe operations

- Activity logs that show all tool executions with their results
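One way an application could combine these mechanisms is a small policy check that runs before every tool call. The sketch below is an assumption about how a host application might do this, not something MCP prescribes; `ToolPolicy`, `decideToolCall`, and `activityLog` are hypothetical names, and the log here records decisions rather than execution results.

```typescript
// Possible outcomes of the policy check.
type Decision = "run" | "ask-user" | "blocked";

// Hypothetical per-user settings kept by the host application.
interface ToolPolicy {
  enabledTools: Set<string>;     // tools the user has made available
  preApprovedTools: Set<string>; // tools allowed to run without a dialog
}

interface ActivityEntry {
  tool: string;
  decision: Decision;
}

// In-memory activity log; a real application would persist this.
const activityLog: ActivityEntry[] = [];

// Decide what to do with a tool call the model has requested.
function decideToolCall(tool: string, policy: ToolPolicy): Decision {
  let decision: Decision;
  if (!policy.enabledTools.has(tool)) {
    decision = "blocked"; // the user did not enable this tool
  } else if (policy.preApprovedTools.has(tool)) {
    decision = "run"; // pre-approved safe operation
  } else {
    decision = "ask-user"; // show an approval dialog first
  }
  activityLog.push({ tool, decision });
  return decision;
}

const policy: ToolPolicy = {
  enabledTools: new Set(["searchFlights", "createCalendarEvent"]),
  preApprovedTools: new Set(["searchFlights"]),
};

const decisions = ["searchFlights", "createCalendarEvent", "sendEmail"].map(
  (tool) => decideToolCall(tool, policy)
);
console.log(decisions, activityLog.length);
```

Keeping the decision separate from the execution makes it straightforward to route the "ask-user" case to whatever approval UI the application provides.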

Resources provide structured access to information that the AI application can retrieve and provide to models as context.

Resources expose data from files, APIs, databases, or any other source that an AI needs to understand context. Applications can access this information directly and decide how to use it - whether that's selecting relevant portions, searching with embeddings, or passing it all to the model.

Each resource has a unique URI (like file:///path/to/document.md) and declares its MIME type for appropriate content handling. Resources support two discovery patterns:

- Direct Resources - fixed URIs that point to specific data. Example:
  - `calendar://events/2024` - returns calendar availability for 2024
- Resource Templates - dynamic URIs with parameters for flexible queries. Examples:
  - `travel://activities/{city}/{category}` - returns activities by city and category
  - `travel://activities/barcelona/museums` - returns all museums in Barcelona

Resource Templates include metadata such as title, description, and expected MIME type, making them discoverable and self-documenting.
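To make the relationship between a template and a concrete URI more tangible, here is a minimal sketch that expands and matches simple `{name}` placeholders. It is a simplification that only handles plain path-segment placeholders (real URI templates follow RFC 6570 and support more operators), and `expandTemplate` and `matchTemplate` are hypothetical helper names.

```typescript
// Replace each {name} placeholder with the URL-encoded parameter value.
function expandTemplate(
  template: string,
  params: Record<string, string>
): string {
  return template.replace(/\{(\w+)\}/g, (_match, key: string) => {
    const value = params[key];
    if (value === undefined) throw new Error(`Missing parameter: ${key}`);
    return encodeURIComponent(value);
  });
}

// Match a concrete URI against a template; returns the parameters or null.
function matchTemplate(
  template: string,
  uri: string
): Record<string, string> | null {
  const names: string[] = [];
  const source = template
    .split(/\{(\w+)\}/) // odd indices are parameter names, even are literals
    .map((part, index) => {
      if (index % 2 === 1) {
        names.push(part);
        return "([^/]+)"; // a parameter matches one path segment
      }
      return part.replace(/[.*+?^${}()|[\]\\]/g, "\\$&"); // escape literals
    })
    .join("");
  const match = new RegExp(`^${source}$`).exec(uri);
  if (!match) return null;
  const result: Record<string, string> = {};
  names.forEach((name, i) => {
    result[name] = decodeURIComponent(match[i + 1]);
  });
  return result;
}

const template = "travel://activities/{city}/{category}";
const uri = expandTemplate(template, { city: "barcelona", category: "museums" });
const params = matchTemplate(template, uri);
console.log(uri, params);
```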

Protocol operations:

| Method | Purpose | Returns |
|---|---|---|
| `resources/list` | List available direct resources | Array of resource descriptors |
| `resources/templates/list` | Discover resource templates | Array of resource template definitions |
| `resources/read` | Retrieve resource contents | Resource data with metadata |
| `resources/subscribe` | Monitor resource changes | Subscription confirmation |

Continuing with the travel planning example, resources provide the AI application with access to relevant information:

- Calendar data (`calendar://events/2024`) - Checks user availability
- Travel documents (`file:///Documents/Travel/passport.pdf`) - Accesses important documents
- Previous itineraries (`trips://history/barcelona-2023`) - References past trips and preferences

The AI application retrieves these resources and decides how to process them, whether selecting a subset of data using embeddings or keyword search, or passing raw data directly to the model.

In this case, it provides calendar data, weather information, and travel preferences to the model, enabling it to check availability, look up weather patterns, and reference past travel preferences.
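The "select a subset" step can be as simple as a keyword filter that runs before anything is passed to the model. The sketch below assumes the application has already read the resource contents into memory; `ResourceContent`, `selectByKeyword`, and the sample texts are hypothetical and only stand in for whatever retrieval strategy (keyword search, embeddings, or none) an application actually uses.

```typescript
// Already-retrieved resource contents (illustrative shape).
interface ResourceContent {
  uri: string;
  text: string;
}

// Rank resources by how many query terms they contain and keep the best ones.
function selectByKeyword(
  resources: ResourceContent[],
  query: string,
  limit: number
): ResourceContent[] {
  const terms = query
    .toLowerCase()
    .split(/\s+/)
    .filter((term) => term.length > 0);
  return resources
    .map((resource) => {
      const haystack = resource.text.toLowerCase();
      const score = terms.filter((term) => haystack.includes(term)).length;
      return { resource, score };
    })
    .filter((entry) => entry.score > 0) // drop resources with no matching term
    .sort((a, b) => b.score - a.score)  // most matching terms first
    .slice(0, limit)
    .map((entry) => entry.resource);
}

// Made-up contents for the three example resources.
const retrieved: ResourceContent[] = [
  { uri: "calendar://events/2024", text: "June 15 to June 22 is free" },
  { uri: "file:///Documents/Travel/passport.pdf", text: "Passport valid until 2030" },
  { uri: "trips://history/barcelona-2023", text: "Barcelona 2023: preferred beaches and architecture tours" },
];

const selected = selectByKeyword(retrieved, "Barcelona beaches", 2);
console.log(selected.map((resource) => resource.uri));
```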

Resource Template Examples:

```json
{
  "uriTemplate": "weather://forecast/{city}/{date}",
  "name": "weather-forecast",
  "title": "Weather Forecast",
  "description": "Get weather forecast for any city and date",
  "mimeType": "application/json"
}

{
  "uriTemplate": "travel://flights/{origin}/{destination}",
  "name": "flight-search",
  "title": "Flight Search",
  "description": "Search available flights between cities",
  "mimeType": "application/json"
}
```

These templates enable flexible queries. For weather data, users can access forecasts for any city/date combination. For flights, they can search routes between any two airports. When a user has entered "NYC" as the origin airport and begins typing "Bar" as the destination airport, the system can suggest "Barcelona (BCN)" or "Barbados (BGI)".

Dynamic resources support parameter completion. For example:

- Typing "Par" as input for `weather://forecast/{city}` might suggest "Paris" or "Park City"
- Typing "JFK" for `flights://search/{airport}` might suggest "JFK - John F. Kennedy International"

The system helps discover valid values without requiring exact format knowledge.
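A server-side completion handler can be little more than a case-insensitive prefix filter over known values. The following is a minimal sketch under that assumption; `completePrefix` and the `cities` list are hypothetical, and a real server might query a database or external API instead.

```typescript
// Return candidates that start with what the user has typed so far.
function completePrefix(
  candidates: string[],
  typed: string,
  maxResults = 5
): string[] {
  const needle = typed.trim().toLowerCase();
  if (needle === "") return []; // nothing typed yet, suggest nothing
  return candidates
    .filter((candidate) => candidate.toLowerCase().startsWith(needle))
    .slice(0, maxResults);
}

// Hypothetical list of cities the weather server knows about.
const cities = ["Paris", "Park City", "Barcelona", "Prague"];

const suggestions = completePrefix(cities, "Par");
console.log(suggestions);
```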

Resources are application-driven, giving them flexibility in how they retrieve, process, and present available context. Common interaction patterns include:

- Tree or list views for browsing resources in familiar folder-like structures

- Search and filter interfaces for finding specific resources

- Automatic context inclusion or smart suggestions based on heuristics or AI selection

- Manual or bulk selection interfaces for including single or multiple resources

Applications are free to implement resource discovery through any interface pattern that suits their needs. The protocol doesn't mandate specific UI patterns, allowing for resource pickers with preview capabilities, smart suggestions based on current conversation context, bulk selection for including multiple resources, or integration with existing file browsers and data explorers.

Prompts provide reusable templates. They allow MCP server authors to provide parameterized prompts for a domain, or showcase how to best use the MCP server.

Prompts are structured templates that define expected inputs and interaction patterns. They are user-controlled, requiring explicit invocation rather than automatic triggering. Prompts can be context-aware, referencing available resources and tools to create comprehensive workflows. Similar to resources, prompts support parameter completion to help users discover valid argument values.

Protocol operations:

| Method | Purpose | Returns |
|---|---|---|
| `prompts/list` | Discover available prompts | Array of prompt descriptors |
| `prompts/get` | Retrieve prompt details | Full prompt definition with arguments |

Prompts provide structured templates for common tasks. In the travel planning context:

"Plan a vacation" prompt:

```json
{
  "name": "plan-vacation",
  "title": "Plan a vacation",
  "description": "Guide through vacation planning process",
  "arguments": [
    { "name": "destination", "type": "string", "required": true },
    { "name": "duration", "type": "number", "description": "days" },
    { "name": "budget", "type": "number", "required": false },
    { "name": "interests", "type": "array", "items": { "type": "string" } }
  ]
}
```

Rather than unstructured natural language input, the prompt system enables:

- Selection of the "Plan a vacation" template

- Structured input: Barcelona, 7 days, $3000, ["beaches", "architecture", "food"]

- Consistent workflow execution based on the template
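Because the prompt declares its arguments up front, an application can check the structured input before invoking the template. The sketch below shows one possible check for missing required arguments; `PromptArgument` mirrors only the `name` and `required` fields from the example above, and `findMissingArguments` is a hypothetical helper.

```typescript
// The subset of a prompt argument declaration needed for this check.
interface PromptArgument {
  name: string;
  required?: boolean;
}

// Return the names of required arguments that were not provided.
function findMissingArguments(
  declared: PromptArgument[],
  provided: Record<string, unknown>
): string[] {
  return declared
    .filter((arg) => arg.required === true)
    .filter((arg) => provided[arg.name] === undefined || provided[arg.name] === "")
    .map((arg) => arg.name);
}

// Argument declarations taken from the "plan-vacation" example.
const planVacationArgs: PromptArgument[] = [
  { name: "destination", required: true },
  { name: "duration" },
  { name: "budget", required: false },
  { name: "interests" },
];

const incomplete = findMissingArguments(planVacationArgs, { duration: 7 });
const complete = findMissingArguments(planVacationArgs, {
  destination: "Barcelona",
  duration: 7,
  budget: 3000,
  interests: ["beaches", "architecture", "food"],
});
console.log(incomplete, complete);
```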

Prompts are user-controlled, requiring explicit invocation. The protocol gives implementers freedom to design interfaces that feel natural within their application. Key principles include:

- Easy discovery of available prompts

- Clear descriptions of what each prompt does

- Natural argument input with validation

- Transparent display of the prompt's underlying template

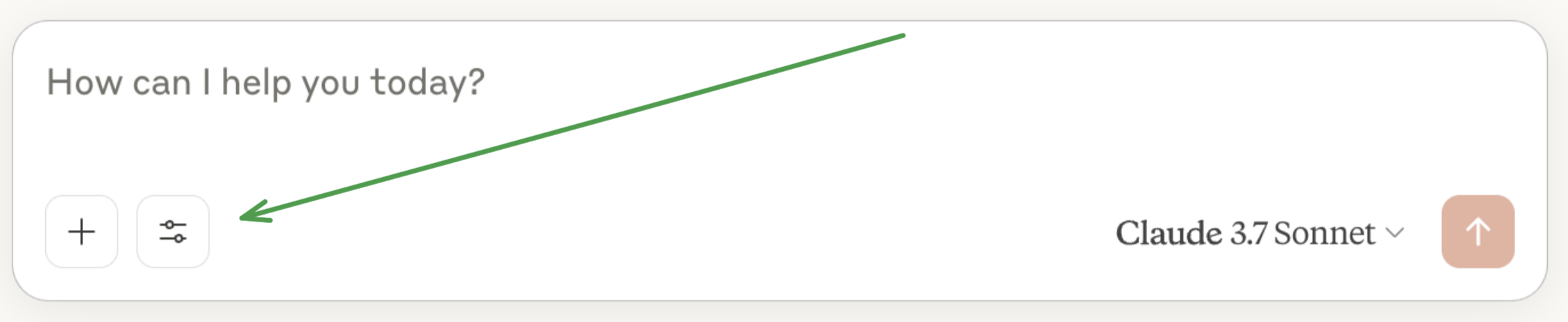

Applications typically expose prompts through various UI patterns such as:

- Slash commands (typing "/" to see available prompts like /plan-vacation)

- Command palettes for searchable access

- Dedicated UI buttons for frequently used prompts

- Context menus that suggest relevant prompts

MCP TypeScript SDK

Overview

The Model Context Protocol allows applications to provide context for LLMs in a standardized way, separating the concerns of providing context from the actual LLM interaction. This TypeScript SDK implements the full MCP specification, making it easy to:

- Create MCP servers that expose resources, prompts and tools
- Build MCP clients that can connect to any MCP server
- Use standard transports like stdio and Streamable HTTP

Installation

```bash
npm install @modelcontextprotocol/sdk
```

Quick Start

Let's create a simple MCP server that exposes a calculator tool and some data. Save the following as server.ts:

```typescript
import { McpServer, ResourceTemplate } from '@modelcontextprotocol/sdk/server/mcp.js';
import { StreamableHTTPServerTransport } from '@modelcontextprotocol/sdk/server/streamableHttp.js';
import express from 'express';
import { z } from 'zod';

// Create an MCP server
const server = new McpServer({
  name: 'demo-server',
  version: '1.0.0'
});

// Add an addition tool
server.registerTool(
  'add',
  {
    title: 'Addition Tool',
    description: 'Add two numbers',
    inputSchema: { a: z.number(), b: z.number() },
    outputSchema: { result: z.number() }
  },
  async ({ a, b }) => {
    const output = { result: a + b };
    return {
      content: [{ type: 'text', text: JSON.stringify(output) }],
      structuredContent: output
    };
  }
);

// Add a dynamic greeting resource
server.registerResource(
  'greeting',
  new ResourceTemplate('greeting://{name}', { list: undefined }),
  {
    title: 'Greeting Resource', // Display name for UI
    description: 'Dynamic greeting generator'
  },
  async (uri, { name }) => ({
    contents: [
      {
        uri: uri.href,
        text: `Hello, ${name}!`
      }
    ]
  })
);

// Set up Express and HTTP transport
const app = express();
app.use(express.json());

app.post('/mcp', async (req, res) => {
  // Create a new transport for each request to prevent request ID collisions
  const transport = new StreamableHTTPServerTransport({
    sessionIdGenerator: undefined,
    enableJsonResponse: true
  });

  res.on('close', () => {
    transport.close();
  });

  await server.connect(transport);
  await transport.handleRequest(req, res, req.body);
});

const port = parseInt(process.env.PORT || '3000');
app.listen(port, () => {
  console.log(`Demo MCP Server running on http://localhost:${port}/mcp`);
}).on('error', error => {
  console.error('Server error:', error);
  process.exit(1);
});
```

Install the dependencies with `npm install @modelcontextprotocol/sdk express zod@3`, and run the server with `npx -y tsx server.ts`.

You can connect to it using any MCP client that supports Streamable HTTP, such as:

- MCP Inspector: `npx @modelcontextprotocol/inspector` and connect to the Streamable HTTP URL http://localhost:3000/mcp
- Claude Code: `claude mcp add --transport http my-server http://localhost:3000/mcp`
- VS Code: `code --add-mcp "{\"name\":\"my-server\",\"type\":\"http\",\"url\":\"http://localhost:3000/mcp\"}"`
- Cursor: Click this deeplink

Then try asking your agent to add two numbers using its new tool!

Core Concepts

Server

The McpServer is your core interface to the MCP protocol. It handles connection management, protocol compliance, and message routing:

```typescript
const server = new McpServer({
  name: 'my-app',
  version: '1.0.0'
});
```

Tools

Tools let LLMs take actions through your server. Tools can perform computation, fetch data and have side effects. Tools should be designed to be model-controlled, i.e. AI models decide which tools to call and with which arguments.

```typescript
// Simple tool with parameters
server.registerTool(
  'calculate-bmi',
  {
    title: 'BMI Calculator',
    description: 'Calculate Body Mass Index',
    inputSchema: { weightKg: z.number(), heightM: z.number() },
    outputSchema: { bmi: z.number() }
  },
  async ({ weightKg, heightM }) => {
    const output = { bmi: weightKg / (heightM * heightM) };
    return {
      content: [{ type: 'text', text: JSON.stringify(output) }],
      structuredContent: output
    };
  }
);

// Async tool with external API call
server.registerTool(
  'fetch-weather',
  {
    title: 'Weather Fetcher',
    description: 'Get weather data for a city',
    inputSchema: { city: z.string() },
    outputSchema: { temperature: z.number(), conditions: z.string() }
  },
  async ({ city }) => {
    const response = await fetch(`https://api.weather.com/${city}`);
    const data = await response.json();
    const output = { temperature: data.temp, conditions: data.conditions };
    return {
      content: [{ type: 'text', text: JSON.stringify(output) }],
      structuredContent: output
    };
  }
);

// Tool that returns ResourceLinks
server.registerTool(
  'list-files',
  {
    title: 'List Files',
    description: 'List project files',
    inputSchema: { pattern: z.string() },
    outputSchema: {
      count: z.number(),
      files: z.array(z.object({ name: z.string(), uri: z.string() }))
    }
  },
  async ({ pattern }) => {
    const output = {
      count: 2,
      files: [
        { name: 'README.md', uri: 'file:///project/README.md' },
        { name: 'index.ts', uri: 'file:///project/src/index.ts' }
      ]
    };
    return {
      content: [
        { type: 'text', text: JSON.stringify(output) },
        // ResourceLinks let tools return references without file content
        {
          type: 'resource_link',
          uri: 'file:///project/README.md',
          name: 'README.md',
          mimeType: 'text/markdown',
          description: 'A README file'
        },
        {
          type: 'resource_link',
          uri: 'file:///project/src/index.ts',
          name: 'index.ts',
          mimeType: 'text/typescript',
          description: 'An index file'
        }
      ],
      structuredContent: output
    };
  }
);
```

ResourceLinks

Tools can return ResourceLink objects to reference resources without embedding their full content. This can help performance when dealing with large files or many resources: clients can then selectively read only the resources they need using the provided URIs.
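On the client side, selective reading starts with picking the links out of a tool result. The sketch below is an assumption about how a client might do that; the `ContentItem` type is a simplified stand-in for the SDK's content types, and `collectResourceLinks` is a hypothetical helper. Reading the selected URIs afterwards would go through the usual `resources/read` operation.

```typescript
// Simplified content items as they might appear in a tool result.
type ContentItem =
  | { type: "text"; text: string }
  | {
      type: "resource_link";
      uri: string;
      name: string;
      mimeType?: string;
      description?: string;
    };

// Collect the URIs of resource links, optionally filtered by MIME type.
function collectResourceLinks(
  content: ContentItem[],
  mimeType?: string
): string[] {
  const uris: string[] = [];
  for (const item of content) {
    if (item.type !== "resource_link") continue; // skip inline text
    if (mimeType !== undefined && item.mimeType !== mimeType) continue;
    uris.push(item.uri);
  }
  return uris;
}

// Content shaped like the 'list-files' result above.
const toolContent: ContentItem[] = [
  { type: "text", text: '{"count":2}' },
  { type: "resource_link", uri: "file:///project/README.md", name: "README.md", mimeType: "text/markdown" },
  { type: "resource_link", uri: "file:///project/src/index.ts", name: "index.ts", mimeType: "text/typescript" },
];

const allLinks = collectResourceLinks(toolContent);
const markdownLinks = collectResourceLinks(toolContent, "text/markdown");
console.log(allLinks, markdownLinks);
```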

Resources

Resources can also expose data to LLMs, but unlike tools they shouldn't perform significant computation or have side effects.

Resources are designed to be used in an application-driven way, meaning MCP client applications can decide how to expose them. For example, a client could expose a resource picker to the human, or could expose them to the model directly.

```typescript
// Static resource
server.registerResource(
  'config',
  'config://app',
  {
    title: 'Application Config',
    description: 'Application configuration data',
    mimeType: 'text/plain'
  },
  async uri => ({
    contents: [
      {
        uri: uri.href,
        text: 'App configuration here'
      }
    ]
  })
);

// Dynamic resource with parameters
server.registerResource(
  'user-profile',
  new ResourceTemplate('users://{userId}/profile', { list: undefined }),
  {
    title: 'User Profile',
    description: 'User profile information'
  },
  async (uri, { userId }) => ({
    contents: [
      {
        uri: uri.href,
        text: `Profile data for user ${userId}`
      }
    ]
  })
);

// Resource with context-aware completion
server.registerResource(
  'repository',
  new ResourceTemplate('github://repos/{owner}/{repo}', {
    list: undefined,
    complete: {
      // Provide intelligent completions based on previously resolved parameters
      repo: (value, context) => {
        if (context?.arguments?.['owner'] === 'org1') {
          return ['project1', 'project2', 'project3'].filter(r => r.startsWith(value));
        }
        return ['default-repo'].filter(r => r.startsWith(value));
      }
    }
  }),
  {
    title: 'GitHub Repository',
    description: 'Repository information'
  },
  async (uri, { owner, repo }) => ({
    contents: [
      {
        uri: uri.href,
        text: `Repository: ${owner}/${repo}`
      }
    ]
  })
);
```

Prompts

Prompts are reusable templates that help humans prompt models to interact with your server. They're designed to be user-driven, and might appear as slash commands in a chat interface.

```typescript
import { completable } from '@modelcontextprotocol/sdk/server/completable.js';

server.registerPrompt(
  'review-code',
  {
    title: 'Code Review',
    description: 'Review code for best practices and potential issues',
    argsSchema: { code: z.string() }
  },
  ({ code }) => ({
    messages: [
      {
        role: 'user',
        content: {
          type: 'text',
          text: `Please review this code:\n\n${code}`
        }
      }
    ]
  })
);

// Prompt with context-aware completion
server.registerPrompt(
  'team-greeting',
  {
    title: 'Team Greeting',
    description: 'Generate a greeting for team members',
    argsSchema: {
      department: completable(z.string(), value => {
        // Department suggestions
        return ['engineering', 'sales', 'marketing', 'support'].filter(d => d.startsWith(value));
      }),
      name: completable(z.string(), (value, context) => {
        // Name suggestions based on selected department
        const department = context?.arguments?.['department'];
        if (department === 'engineering') {
          return ['Alice', 'Bob', 'Charlie'].filter(n => n.startsWith(value));
        } else if (department === 'sales') {
          return ['David', 'Eve', 'Frank'].filter(n => n.startsWith(value));
        } else if (department === 'marketing') {
          return ['Grace', 'Henry', 'Iris'].filter(n => n.startsWith(value));
        }
        return ['Guest'].filter(n => n.startsWith(value));
      })
    }
  },
  ({ department, name }) => ({
    messages: [
      {
        role: 'assistant',
        content: {
          type: 'text',
          text: `Hello ${name}, welcome to the ${department} team!`
        }
      }
    ]
  })
);
```

Completions

MCP supports argument completions to help users fill in prompt arguments and resource template parameters. See the examples above for resource completions and prompt completions.

Client Usage

```typescript
// Request completions for any argument
const result = await client.complete({
  ref: {
    type: 'ref/prompt', // or "ref/resource"
    name: 'example' // or uri: "template://..."
  },
  argument: {
    name: 'argumentName',
    value: 'partial' // What the user has typed so far
  },
  context: {
    // Optional: Include previously resolved arguments
    arguments: {
      previousArg: 'value'
    }
  }
});
```

Display Names and Metadata

All resources, tools, and prompts support an optional title field for better UI presentation. The title is used as a display name (e.g. 'Create a new issue'), while name remains the unique identifier (e.g. create_issue).

Note: The register* methods (registerTool, registerPrompt, registerResource) are the recommended approach for new code. The older methods (tool, prompt, resource) remain available for backwards compatibility.

Title Precedence for Tools

For tools specifically, there are two ways to specify a title:

- title field in the tool configuration
- annotations.title field (when using the older tool() method with annotations)

The precedence order is: title → annotations.title → name

```typescript
// Using registerTool (recommended)
server.registerTool(
  'my_tool',
  {
    title: 'My Tool', // This title takes precedence
    annotations: {
      title: 'Annotation Title' // This is ignored if title is set
    }
  },
  handler
);

// Using tool with annotations (older API)
server.tool(
  'my_tool',
  'description',
  {
    title: 'Annotation Title' // This is used as title
  },
  handler
);
```

When building clients, use the provided utility to get the appropriate display name:

```typescript
import { getDisplayName } from '@modelcontextprotocol/sdk/shared/metadataUtils.js';

// Automatically handles the precedence: title → annotations.title → name
const displayName = getDisplayName(tool);
```

Sampling

MCP servers can request LLM completions from connected clients that support sampling.

```typescript
import { McpServer } from '@modelcontextprotocol/sdk/server/mcp.js';
import { StreamableHTTPServerTransport } from '@modelcontextprotocol/sdk/server/streamableHttp.js';
import express from 'express';
import { z } from 'zod';

const mcpServer = new McpServer({
  name: 'tools-with-sample-server',
  version: '1.0.0'
});

// Tool that uses LLM sampling to summarize any text
mcpServer.registerTool(
  'summarize',
  {
    title: 'Text Summarizer',
    description: 'Summarize any text using an LLM',
    inputSchema: {
      text: z.string().describe('Text to summarize')
    },
    outputSchema: { summary: z.string() }
  },
  async ({ text }) => {
    // Call the LLM through MCP sampling
    const response = await mcpServer.server.createMessage({
      messages: [
        {
          role: 'user',
          content: {
            type: 'text',
            text: `Please summarize the following text concisely:\n\n${text}`
          }
        }
      ],
      maxTokens: 500
    });

    const summary = response.content.type === 'text' ? response.content.text : 'Unable to generate summary';
    const output = { summary };
    return {
      content: [{ type: 'text', text: JSON.stringify(output) }],
      structuredContent: output
    };
  }
);

const app = express();
app.use(express.json());

app.post('/mcp', async (req, res) => {
  const transport = new StreamableHTTPServerTransport({
    sessionIdGenerator: undefined,
    enableJsonResponse: true
  });

  res.on('close', () => {
    transport.close();
  });

  await mcpServer.connect(transport);
  await transport.handleRequest(req, res, req.body);
});

const port = parseInt(process.env.PORT || '3000');
app.listen(port, () => {
  console.log(`MCP Server running on http://localhost:${port}/mcp`);
}).on('error', error => {
  console.error('Server error:', error);
  process.exit(1);
});
```

Running Your Server

MCP servers in TypeScript need to be connected to a transport to communicate with clients. How you start the server depends on the choice of transport:

Streamable HTTP

For remote servers, use the Streamable HTTP transport.

Without Session Management (Recommended)

For most use cases where session management isn't needed:

```typescript
import { McpServer } from '@modelcontextprotocol/sdk/server/mcp.js';
import { StreamableHTTPServerTransport } from '@modelcontextprotocol/sdk/server/streamableHttp.js';
import express from 'express';
import { z } from 'zod';

const app = express();
app.use(express.json());

// Create the MCP server once (can be reused across requests)
const server = new McpServer({
  name: 'example-server',
  version: '1.0.0'
});

// Set up your tools, resources, and prompts
server.registerTool(
  'echo',
  {
    title: 'Echo Tool',
    description: 'Echoes back the provided message',
    inputSchema: { message: z.string() },
    outputSchema: { echo: z.string() }
  },
  async ({ message }) => {
    const output = { echo: `Tool echo: ${message}` };
    return {
      content: [{ type: 'text', text: JSON.stringify(output) }],
      structuredContent: output
    };
  }
);

app.post('/mcp', async (req, res) => {
  // In stateless mode, create a new transport for each request to prevent
  // request ID collisions. Different clients may use the same JSON-RPC request IDs,
  // which would cause responses to be routed to the wrong HTTP connections if
  // the transport state is shared.
  try {
    const transport = new StreamableHTTPServerTransport({
      sessionIdGenerator: undefined,
      enableJsonResponse: true
    });

    res.on('close', () => {
      transport.close();
    });

    await server.connect(transport);
    await transport.handleRequest(req, res, req.body);
  } catch (error) {
    console.error('Error handling MCP request:', error);
    if (!res.headersSent) {
      res.status(500).json({
        jsonrpc: '2.0',
        error: {
          code: -32603,
          message: 'Internal server error'
        },
        id: null
      });
    }
  }
});

const port = parseInt(process.env.PORT || '3000');
app.listen(port, () => {
  console.log(`MCP Server running on http://localhost:${port}/mcp`);
}).on('error', error => {
  console.error('Server error:', error);
  process.exit(1);
});
```

With Session Management

In some cases, servers need stateful sessions. This can be achieved by session management in the MCP protocol.

```typescript
import express from 'express';
import { randomUUID } from 'node:crypto';
import { McpServer } from '@modelcontextprotocol/sdk/server/mcp.js';
import { StreamableHTTPServerTransport } from '@modelcontextprotocol/sdk/server/streamableHttp.js';
import { isInitializeRequest } from '@modelcontextprotocol/sdk/types.js';

const app = express();
app.use(express.json());

// Map to store transports by session ID
const transports: { [sessionId: string]: StreamableHTTPServerTransport } = {};

// Handle POST requests for client-to-server communication
app.post('/mcp', async (req, res) => {
  // Check for existing session ID
  const sessionId = req.headers['mcp-session-id'] as string | undefined;
  let transport: StreamableHTTPServerTransport;

  if (sessionId && transports[sessionId]) {
    // Reuse existing transport
    transport = transports[sessionId];
  } else if (!sessionId && isInitializeRequest(req.body)) {
    // New initialization request
    transport = new StreamableHTTPServerTransport({
      sessionIdGenerator: () => randomUUID(),
      onsessioninitialized: sessionId => {
        // Store the transport by session ID
        transports[sessionId] = transport;
      }
      // DNS rebinding protection is disabled by default for backwards compatibility. If you are running this server
      // locally, make sure to set:
      // enableDnsRebindingProtection: true,
      // allowedHosts: ['127.0.0.1'],
    });

    // Clean up transport when closed
    transport.onclose = () => {
      if (transport.sessionId) {
        delete transports[transport.sessionId];
      }
    };

    const server = new McpServer({
      name: 'example-server',
      version: '1.0.0'
    });

    // ... set up server resources, tools, and prompts ...

    // Connect to the MCP server
    await server.connect(transport);
  } else {
    // Invalid request
    res.status(400).json({
      jsonrpc: '2.0',
      error: {
        code: -32000,
        message: 'Bad Request: No valid session ID provided'
      },
      id: null
    });
    return;
  }

  // Handle the request
  await transport.handleRequest(req, res, req.body);
});

// Reusable handler for GET and DELETE requests
const handleSessionRequest = async (req: express.Request, res: express.Response) => {
  const sessionId = req.headers['mcp-session-id'] as string | undefined;
  if (!sessionId || !transports[sessionId]) {
    res.status(400).send('Invalid or missing session ID');
    return;
  }

  const transport = transports[sessionId];
  await transport.handleRequest(req, res);
};

// Handle GET requests for server-to-client notifications via SSE
app.get('/mcp', handleSessionRequest);

// Handle DELETE requests for session termination
app.delete('/mcp', handleSessionRequest);

app.listen(3000);
```

CORS Configuration for Browser-Based Clients

If you'd like your server to be accessible by browser-based MCP clients, you'll need to configure CORS headers. The Mcp-Session-Id header must be exposed for browser clients to access it:

```typescript
import cors from 'cors';

// Add CORS middleware before your MCP routes
app.use(
  cors({
    origin: '*', // Configure appropriately for production, for example:
    // origin: ['https://your-remote-domain.com', 'https://your-other-remote-domain.com'],
    exposedHeaders: ['Mcp-Session-Id'],
    allowedHeaders: ['Content-Type', 'mcp-session-id']
  })
);
```

This configuration is necessary because:

- The MCP Streamable HTTP transport uses the Mcp-Session-Id header for session management
- Browsers restrict access to response headers unless explicitly exposed via CORS
- Without this configuration, browser-based clients won't be able to read the session ID from initialization responses

DNS Rebinding Protection

The Streamable HTTP transport includes DNS rebinding protection to prevent security vulnerabilities. By default, this protection is disabled for backwards compatibility.

Important: If you are running this server locally, enable DNS rebinding protection:

```typescript
const transport = new StreamableHTTPServerTransport({
  sessionIdGenerator: () => randomUUID(),
  enableDnsRebindingProtection: true,
  allowedHosts: ['127.0.0.1', ...],
  allowedOrigins: ['https://yourdomain.com', 'https://www.yourdomain.com']
});
```

stdio

For local integrations spawned by another process, you can use the stdio transport:

```typescript
import { McpServer } from '@modelcontextprotocol/sdk/server/mcp.js';
import { StdioServerTransport } from '@modelcontextprotocol/sdk/server/stdio.js';

const server = new McpServer({
  name: 'example-server',
  version: '1.0.0'
});

// ... set up server resources, tools, and prompts ...

const transport = new StdioServerTransport();
await server.connect(transport);
```

Testing and Debugging

To test your server, you can use the MCP Inspector. See its README for more information.

Examples

Echo Server

A simple server demonstrating resources, tools, and prompts:

```typescript
import { McpServer, ResourceTemplate } from '@modelcontextprotocol/sdk/server/mcp.js';
import { z } from 'zod';

const server = new McpServer({
  name: 'echo-server',
  version: '1.0.0'
});

server.registerTool(
  'echo',
  {
    title: 'Echo Tool',
    description: 'Echoes back the provided message',
    inputSchema: { message: z.string() },
    outputSchema: { echo: z.string() }
  },
  async ({ message }) => {
    const output = { echo: `Tool echo: ${message}` };
    return {
      content: [{ type: 'text', text: JSON.stringify(output) }],
      structuredContent: output
    };
  }
);

server.registerResource(
  'echo',
  new ResourceTemplate('echo://{message}', { list: undefined }),
  {
    title: 'Echo Resource',
    description: 'Echoes back messages as resources'
  },
  async (uri, { message }) => ({
    contents: [
      {
        uri: uri.href,
        text: `Resource echo: ${message}`
      }
    ]
  })
);

server.registerPrompt(
  'echo',
  {
    title: 'Echo Prompt',
    description: 'Creates a prompt to process a message',
    argsSchema: { message: z.string() }
  },
  ({ message }) => ({
    messages: [
      {
        role: 'user',
        content: {
          type: 'text',
          text: `Please process this message: ${message}`
        }
      }
    ]
  })
);
```

SQLite Explorer

A more complex example showing database integration:

import { McpServer } from '@modelcontextprotocol/sdk/server/mcp.js'; import sqlite3 from 'sqlite3'; import { promisify } from 'util'; import { z } from 'zod';

const server = new McpServer({ name: 'sqlite-explorer', version: '1.0.0' });

// Helper to create DB connection const getDb = () => { const db = new sqlite3.Database('database.db'); return { all: promisify<string, any[]>(db.all.bind(db)), close: promisify(db.close.bind(db)) }; };

server.registerResource( 'schema', 'schema://main', { title: 'Database Schema', description: 'SQLite database schema', mimeType: 'text/plain' }, async uri => { const db = getDb(); try { const tables = await db.all("SELECT sql FROM sqlite_master WHERE type='table'"); return { contents: [ { uri: uri.href, text: tables.map((t: { sql: string }) => t.sql).join('\n') } ] }; } finally { await db.close(); } } );

server.registerTool(

'query',

{

title: 'SQL Query',

description: 'Execute SQL queries on the database',

inputSchema: { sql: z.string() },

outputSchema: {

rows: z.array(z.record(z.any())),

rowCount: z.number()

}

},

async ({ sql }) => {

const db = getDb();

try {

const results = await db.all(sql);

const output = { rows: results, rowCount: results.length };

return {

content: [

{

type: 'text',

text: JSON.stringify(output, null, 2)

}

],

structuredContent: output

};

} catch (err: unknown) {

const error = err as Error;

return {

content: [

{

type: 'text',

text: `Error: ${error.message}`

}

],

isError: true

};

} finally {

await db.close();

}

}

);

Advanced Usage

Dynamic Servers

If you want to offer an initial set of tools/prompts/resources, but later add additional ones based on user action or external state change, you can add/update/remove them after the Server is connected. This will automatically emit the corresponding listChanged notifications:

import { McpServer } from '@modelcontextprotocol/sdk/server/mcp.js';
import { StreamableHTTPServerTransport } from '@modelcontextprotocol/sdk/server/streamableHttp.js';
import express from 'express';
import { z } from 'zod';

const server = new McpServer({ name: 'Dynamic Example', version: '1.0.0' });

const listMessageTool = server.registerTool(
  'listMessages',
  {
    title: 'List Messages',
    description: 'List messages in a channel',
    inputSchema: { channel: z.string() },
    outputSchema: { messages: z.array(z.string()) }
  },
  async ({ channel }) => {
    const messages = await listMessages(channel);
    const output = { messages };
    return {
      content: [{ type: 'text', text: JSON.stringify(output) }],
      structuredContent: output
    };
  }
);

const putMessageTool = server.registerTool(

'putMessage',

{

title: 'Put Message',

description: 'Send a message to a channel',

inputSchema: { channel: z.string(), message: z.string() },

outputSchema: { success: z.boolean() }

},

async ({ channel, message }) => {

await putMessage(channel, message);

const output = { success: true };

return {

content: [{ type: 'text', text: JSON.stringify(output) }],

structuredContent: output

};

}

);

// Until we upgrade auth, putMessage is disabled (won't show up in listTools)

putMessageTool.disable();

const upgradeAuthTool = server.registerTool(

'upgradeAuth',

{

title: 'Upgrade Authorization',

description: 'Upgrade user authorization level',

inputSchema: { permission: z.enum(['write', 'admin']) },

outputSchema: {

success: z.boolean(),

newPermission: z.string()

}

},

// Any mutations here will automatically emit listChanged notifications

async ({ permission }) => {

const { ok, err, previous } = await upgradeAuthAndStoreToken(permission);

if (!ok) {

return {

content: [{ type: 'text', text: `Error: ${err}` }],

isError: true

};

}

// If we previously had read-only access, 'putMessage' is now available

if (previous === 'read') {

putMessageTool.enable();

}

if (permission === 'write') {

// If we've just upgraded to 'write' permissions, we can still call 'upgradeAuth'

// but can only upgrade to 'admin'.

upgradeAuthTool.update({

paramsSchema: { permission: z.enum(['admin']) } // change validation rules

});

} else {

// If we're now an admin, we no longer have anywhere to upgrade to, so fully remove that tool

upgradeAuthTool.remove();

}

const output = { success: true, newPermission: permission };

return {

content: [{ type: 'text', text: JSON.stringify(output) }],

structuredContent: output

};

}

);

// Connect with HTTP transport
const app = express();
app.use(express.json());

app.post('/mcp', async (req, res) => {
  const transport = new StreamableHTTPServerTransport({
    sessionIdGenerator: undefined,
    enableJsonResponse: true
  });

res.on('close', () => {

transport.close();

});

await server.connect(transport);

await transport.handleRequest(req, res, req.body);

});

const port = parseInt(process.env.PORT || '3000');

app.listen(port, () => {

console.log(`MCP Server running on http://localhost:${port}/mcp`);

});

Improving Network Efficiency with Notification Debouncing

When performing bulk updates that trigger notifications (e.g., enabling or disabling multiple tools in a loop), the SDK can send a large number of messages in a short period. To improve performance and reduce network traffic, you can enable notification debouncing.

This feature coalesces multiple, rapid calls for the same notification type into a single message. For example, if you disable five tools in a row, only one notifications/tools/list_changed message will be sent instead of five.

[!IMPORTANT] This feature is designed for "simple" notifications that do not carry unique data in their parameters. To prevent silent data loss, debouncing is automatically bypassed for any notification that contains a params object or a relatedRequestId. Such notifications will always be sent immediately.
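The coalescing and bypass rules described above can be sketched in a few lines of TypeScript. This is only an illustration of the idea, not the SDK's implementation: `NotificationCoalescer`, `OutgoingNotification`, and the explicit `flush()` call are hypothetical names chosen for the example, and a real implementation would schedule the flush automatically.

```typescript
// Illustrative sketch only: these names are hypothetical, not SDK exports.
type OutgoingNotification = { method: string; params?: Record<string, unknown> };

class NotificationCoalescer {
  private pending = new Set<string>();
  private debouncedMethods: ReadonlySet<string>;
  private send: (n: OutgoingNotification) => void;

  constructor(debouncedMethods: ReadonlySet<string>, send: (n: OutgoingNotification) => void) {
    this.debouncedMethods = debouncedMethods;
    this.send = send;
  }

  notify(n: OutgoingNotification): void {
    // Notifications that carry params are never coalesced, so no data is lost.
    const canCoalesce = this.debouncedMethods.has(n.method) && n.params === undefined;
    if (!canCoalesce) {
      this.send(n);
      return;
    }
    // Remember the method once; repeats before the next flush are absorbed.
    this.pending.add(n.method);
  }

  // Emit one message per pending method, then reset.
  flush(): void {
    this.pending.forEach(method => this.send({ method }));
    this.pending.clear();
  }
}

const sentMessages: OutgoingNotification[] = [];
const coalescer = new NotificationCoalescer(
  new Set(['notifications/tools/list_changed']),
  n => sentMessages.push(n)
);

// Five rapid tool changes collapse into one message at flush time.
for (let i = 0; i < 5; i++) {
  coalescer.notify({ method: 'notifications/tools/list_changed' });
}
// A notification with params bypasses coalescing and is sent immediately.
coalescer.notify({ method: 'notifications/progress', params: { progress: 1 } });
coalescer.flush();

console.log(sentMessages.map(m => m.method));
```

In this sketch, the message with `params` goes out first because it is sent immediately, and the five `list_changed` calls produce a single message at flush time.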

This is an opt-in feature configured during server initialization.

import { McpServer } from "@modelcontextprotocol/sdk/server/mcp.js";

const server = new McpServer(
  { name: "efficient-server", version: "1.0.0" },
  {
    // Enable notification debouncing for specific methods
    debouncedNotificationMethods: [
      'notifications/tools/list_changed',
      'notifications/resources/list_changed',
      'notifications/prompts/list_changed'
    ]
  }
);

// Now, any rapid changes to tools, resources, or prompts will result
// in a single, consolidated notification for each type.
server.registerTool("tool1", ...).disable();
server.registerTool("tool2", ...).disable();
server.registerTool("tool3", ...).disable();
// Only one 'notifications/tools/list_changed' is sent.

Low-Level Server

For more control, you can use the low-level Server class directly:

import { Server } from '@modelcontextprotocol/sdk/server/index.js';
import { StdioServerTransport } from '@modelcontextprotocol/sdk/server/stdio.js';
import { ListPromptsRequestSchema, GetPromptRequestSchema } from '@modelcontextprotocol/sdk/types.js';

const server = new Server(
  { name: 'example-server', version: '1.0.0' },
  { capabilities: { prompts: {} } }
);

server.setRequestHandler(ListPromptsRequestSchema, async () => {
  return {
    prompts: [
      {
        name: 'example-prompt',
        description: 'An example prompt template',
        arguments: [
          { name: 'arg1', description: 'Example argument', required: true }
        ]
      }
    ]
  };
});

server.setRequestHandler(GetPromptRequestSchema, async request => {
  if (request.params.name !== 'example-prompt') {
    throw new Error('Unknown prompt');
  }
  return {
    description: 'Example prompt',
    messages: [
      { role: 'user', content: { type: 'text', text: 'Example prompt text' } }
    ]
  };
});

const transport = new StdioServerTransport();
await server.connect(transport);

Eliciting User Input

MCP servers can request additional information from users through the elicitation feature. This is useful for interactive workflows where the server needs user input or confirmation:

// Server-side: Restaurant booking tool that asks for alternatives
server.registerTool(
  'book-restaurant',
  {
    title: 'Book Restaurant',
    description: 'Book a table at a restaurant',
    inputSchema: {
      restaurant: z.string(),
      date: z.string(),
      partySize: z.number()
    },
    outputSchema: {
      success: z.boolean(),
      booking: z
        .object({
          restaurant: z.string(),
          date: z.string(),
          partySize: z.number()
        })
        .optional(),
      alternatives: z.array(z.string()).optional()
    }
  },
  async ({ restaurant, date, partySize }) => {
    // Check availability
    const available = await checkAvailability(restaurant, date, partySize);

if (!available) {

// Ask user if they want to try alternative dates

const result = await server.server.elicitInput({

message: `No tables available at ${restaurant} on ${date}. Would you like to check alternative dates?`,

requestedSchema: {

type: 'object',

properties: {

checkAlternatives: {

type: 'boolean',

title: 'Check alternative dates',

description: 'Would you like me to check other dates?'

},

flexibleDates: {

type: 'string',

title: 'Date flexibility',

description: 'How flexible are your dates?',

enum: ['next_day', 'same_week', 'next_week'],

enumNames: ['Next day', 'Same week', 'Next week']

}

},

required: ['checkAlternatives']

}

});

if (result.action === 'accept' && result.content?.checkAlternatives) {

const alternatives = await findAlternatives(restaurant, date, partySize, result.content.flexibleDates as string);

const output = { success: false, alternatives };

return {

content: [

{

type: 'text',

text: JSON.stringify(output)

}

],

structuredContent: output

};

}

const output = { success: false };

return {

content: [

{

type: 'text',

text: JSON.stringify(output)

}

],

structuredContent: output

};

}

// Book the table

await makeBooking(restaurant, date, partySize);

const output = {

success: true,

booking: { restaurant, date, partySize }

};

return {

content: [

{

type: 'text',

text: JSON.stringify(output)

}

],

structuredContent: output

};

}

);

Client-side: Handle elicitation requests

// This is a placeholder - implement based on your UI framework
async function getInputFromUser(
  message: string,
  schema: any
): Promise<{
  action: 'accept' | 'decline' | 'cancel';
  data?: Record<string, any>;
}> {
  // This should be implemented depending on the app
  throw new Error('getInputFromUser must be implemented for your platform');
}

client.setRequestHandler(ElicitRequestSchema, async request => {
  const userResponse = await getInputFromUser(request.params.message, request.params.requestedSchema);

return {

action: userResponse.action,

content: userResponse.action === 'accept' ? userResponse.data : undefined

};

});

Note: Elicitation requires client support. Clients must declare the elicitation capability during initialization.

Writing MCP Clients

The SDK provides a high-level client interface:

import { Client } from '@modelcontextprotocol/sdk/client/index.js';
import { StdioClientTransport } from '@modelcontextprotocol/sdk/client/stdio.js';

const transport = new StdioClientTransport({ command: 'node', args: ['server.js'] });

const client = new Client({ name: 'example-client', version: '1.0.0' });

await client.connect(transport);

// List prompts
const prompts = await client.listPrompts();

// Get a prompt
const prompt = await client.getPrompt({ name: 'example-prompt', arguments: { arg1: 'value' } });

// List resources
const resources = await client.listResources();

// Read a resource
const resource = await client.readResource({ uri: 'file:///example.txt' });

// Call a tool
const result = await client.callTool({ name: 'example-tool', arguments: { arg1: 'value' } });

Proxy Authorization Requests Upstream

You can proxy OAuth requests to an external authorization provider:

import express from 'express';
import { ProxyOAuthServerProvider } from '@modelcontextprotocol/sdk/server/auth/providers/proxyProvider.js';
import { mcpAuthRouter } from '@modelcontextprotocol/sdk/server/auth/router.js';

const app = express();

const proxyProvider = new ProxyOAuthServerProvider({
  endpoints: {
    authorizationUrl: 'https://auth.external.com/oauth2/v1/authorize',
    tokenUrl: 'https://auth.external.com/oauth2/v1/token',
    revocationUrl: 'https://auth.external.com/oauth2/v1/revoke'
  },
  verifyAccessToken: async token => {
    return { token, clientId: '123', scopes: ['openid', 'email', 'profile'] };
  },
  getClient: async client_id => {
    return { client_id, redirect_uris: ['http://localhost:3000/callback'] };
  }
});

app.use(
  mcpAuthRouter({
    provider: proxyProvider,
    issuerUrl: new URL('http://auth.external.com'),
    baseUrl: new URL('http://mcp.example.com'),
    serviceDocumentationUrl: new URL('https://docs.example.com/')
  })
);

This setup allows you to:

- Forward OAuth requests to an external provider
- Add custom token validation logic
- Manage client registrations
- Provide custom documentation URLs
- Maintain control over the OAuth flow while delegating to an external provider

Backwards Compatibility

Clients and servers with StreamableHttp transport can maintain backwards compatibility with the deprecated HTTP+SSE transport (from protocol version 2024-11-05) as follows.

Client-Side Compatibility

For clients that need to work with both Streamable HTTP and older SSE servers:

import { Client } from '@modelcontextprotocol/sdk/client/index.js';
import { StreamableHTTPClientTransport } from '@modelcontextprotocol/sdk/client/streamableHttp.js';
import { SSEClientTransport } from '@modelcontextprotocol/sdk/client/sse.js';

let client: Client | undefined = undefined;
const baseUrl = new URL(url);
try {
  client = new Client({ name: 'streamable-http-client', version: '1.0.0' });
  const transport = new StreamableHTTPClientTransport(new URL(baseUrl));
  await client.connect(transport);
  console.log('Connected using Streamable HTTP transport');
} catch (error) {
  // If that fails with a 4xx error, try the older SSE transport
  console.log('Streamable HTTP connection failed, falling back to SSE transport');
  client = new Client({ name: 'sse-client', version: '1.0.0' });
  const sseTransport = new SSEClientTransport(baseUrl);
  await client.connect(sseTransport);
  console.log('Connected using SSE transport');
}

Server-Side Compatibility

For servers that need to support both Streamable HTTP and older clients:

import express from 'express';
import { McpServer } from '@modelcontextprotocol/sdk/server/mcp.js';
import { StreamableHTTPServerTransport } from '@modelcontextprotocol/sdk/server/streamableHttp.js';
import { SSEServerTransport } from '@modelcontextprotocol/sdk/server/sse.js';

const server = new McpServer({ name: 'backwards-compatible-server', version: '1.0.0' });

// ... set up server resources, tools, and prompts ...

const app = express();
app.use(express.json());

// Store transports for each session type
const transports = {
  streamable: {} as Record<string, StreamableHTTPServerTransport>,
  sse: {} as Record<string, SSEServerTransport>
};

// Modern Streamable HTTP endpoint
app.all('/mcp', async (req, res) => {
  // Handle Streamable HTTP transport for modern clients
  // Implementation as shown in the "With Session Management" example
  // ...
});

// Legacy SSE endpoint for older clients
app.get('/sse', async (req, res) => {
  // Create SSE transport for legacy clients
  const transport = new SSEServerTransport('/messages', res);
  transports.sse[transport.sessionId] = transport;

res.on('close', () => {

delete transports.sse[transport.sessionId];

});

await server.connect(transport);

});

// Legacy message endpoint for older clients
app.post('/messages', async (req, res) => {
  const sessionId = req.query.sessionId as string;
  const transport = transports.sse[sessionId];
  if (transport) {
    await transport.handlePostMessage(req, res, req.body);
  } else {
    res.status(400).send('No transport found for sessionId');
  }
});

app.listen(3000);

Note: The SSE transport is now deprecated in favor of Streamable HTTP. New implementations should use Streamable HTTP, and existing SSE implementations should plan to migrate.

Documentation

- Model Context Protocol documentation
- MCP Specification
- Example Servers

Contributing

Issues and pull requests are welcome on GitHub at https://github.com/modelcontextprotocol/typescript-sdk.

License

This project is licensed under the MIT License; see the LICENSE file for details.


The real power of MCP emerges when multiple servers work together, combining their specialized capabilities through a unified interface.

Consider a personalized AI travel planner application, with three connected servers:

- Travel Server - Handles flights, hotels, and itineraries

- Weather Server - Provides climate data and forecasts

- Calendar/Email Server - Manages schedules and communications

1. User invokes a prompt with parameters:

   {
     "prompt": "plan-vacation",
     "arguments": {
       "destination": "Barcelona",
       "departure_date": "2024-06-15",
       "return_date": "2024-06-22",
       "budget": 3000,
       "travelers": 2
     }
   }

2. User selects resources to include:

   - `calendar://my-calendar/June-2024` (from Calendar Server)
   - `travel://preferences/europe` (from Travel Server)
   - `travel://past-trips/Spain-2023` (from Travel Server)

3. AI processes the request using tools:

   The AI first reads all selected resources to gather context - identifying available dates from the calendar, learning preferred airlines and hotel types from travel preferences, and discovering previously enjoyed locations from past trips.

   Using this context, the AI then executes a series of Tools:

   - `searchFlights()` - Queries airlines for NYC to Barcelona flights
   - `checkWeather()` - Retrieves climate forecasts for travel dates

   The AI then uses this information to create the booking and following steps, requesting approval from the user where necessary:

   - `bookHotel()` - Finds hotels within the specified budget
   - `createCalendarEvent()` - Adds the trip to the user's calendar
   - `sendEmail()` - Sends confirmation with trip details

The result: Through multiple MCP servers, the user researched and booked a Barcelona trip tailored to their schedule. The "Plan a Vacation" prompt guided the AI to combine Resources (calendar availability and travel history) with Tools (searching flights, booking hotels, updating calendars) across different servers—gathering context and executing the booking. A task that could've taken hours was completed in minutes using MCP.

This overview of the Model Context Protocol (MCP) discusses its scope and core concepts, and provides an example demonstrating each core concept.

Because MCP SDKs abstract away many concerns, most developers will likely find the data layer protocol section to be the most useful. It discusses how MCP servers can provide context to an AI application.

For specific implementation details, please refer to the documentation for your language-specific SDK.

The Model Context Protocol includes the following projects:

- MCP Specification: A specification of MCP that outlines the implementation requirements for clients and servers.

- MCP SDKs: SDKs for different programming languages that implement MCP.

- MCP Development Tools: Tools for developing MCP servers and clients, including the MCP Inspector.

- MCP Reference Server Implementations: Reference implementations of MCP servers.

MCP follows a client-server architecture where an MCP host — an AI application like Claude Code or Claude Desktop — establishes connections to one or more MCP servers. The MCP host accomplishes this by creating one MCP client for each MCP server. Each MCP client maintains a dedicated one-to-one connection with its corresponding MCP server.

The key participants in the MCP architecture are:

- MCP Host: The AI application that coordinates and manages one or multiple MCP clients

- MCP Client: A component that maintains a connection to an MCP server and obtains context from an MCP server for the MCP host to use

- MCP Server: A program that provides context to MCP clients

For example: Visual Studio Code acts as an MCP host. When Visual Studio Code establishes a connection to an MCP server, such as the Sentry MCP server, the Visual Studio Code runtime instantiates an MCP client object that maintains the connection to the Sentry MCP server. When Visual Studio Code subsequently connects to another MCP server, such as the local filesystem server, the Visual Studio Code runtime instantiates an additional MCP client object to maintain this connection, hence maintaining a one-to-one relationship of MCP clients to MCP servers.

graph TB

subgraph "MCP Host (AI Application)"

Client1["MCP Client 1"]

Client2["MCP Client 2"]

Client3["MCP Client 3"]

end

Server1["MCP Server 1<br/>(e.g., Sentry)"]

Server2["MCP Server 2<br/>(e.g., Filesystem)"]

Server3["MCP Server 3<br/>(e.g., Database)"]

Client1 ---|"One-to-one<br/>connection"| Server1

Client2 ---|"One-to-one<br/>connection"| Server2

Client3 ---|"One-to-one<br/>connection"| Server3

style Client1 fill:#e1f5fe

style Client2 fill:#e1f5fe

style Client3 fill:#e1f5fe

style Server1 fill:#f3e5f5

style Server2 fill:#f3e5f5

style Server3 fill:#f3e5f5
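The one-to-one relationship between clients and servers can be sketched as a host that keeps a map with exactly one client per server. This is a minimal illustration of the bookkeeping only: `SketchHost` and `SketchClient` are hypothetical names, and no real connection or SDK class is involved.

```typescript
// Hypothetical names for illustration; these are not SDK classes.
class SketchClient {
  serverName: string;

  constructor(serverName: string) {
    this.serverName = serverName;
  }
}

class SketchHost {
  // One dedicated client per server, keyed by server name.
  private clients = new Map<string, SketchClient>();

  connect(serverName: string): SketchClient {
    const existing = this.clients.get(serverName);
    if (existing) {
      return existing; // a server never gets a second client from this host
    }
    const client = new SketchClient(serverName);
    this.clients.set(serverName, client);
    return client;
  }

  clientCount(): number {
    return this.clients.size;
  }
}

const sketchHost = new SketchHost();
const sentryClient = sketchHost.connect('sentry');
const filesystemClient = sketchHost.connect('filesystem');

console.log(sketchHost.clientCount()); // 2: one client per connected server
```

Connecting to a second server adds a second client rather than reusing the first, which mirrors the Visual Studio Code example above.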

Note that MCP server refers to the program that serves context data, regardless of where it runs. MCP servers can execute locally or remotely. For example, when Claude Desktop launches the filesystem server, the server runs locally on the same machine because it uses the STDIO transport. This is commonly referred to as a "local" MCP server. The official Sentry MCP server runs on the Sentry platform, and uses the Streamable HTTP transport. This is commonly referred to as a "remote" MCP server.

MCP consists of two layers:

- Data layer: Defines the JSON-RPC based protocol for client-server communication, including lifecycle management, and core primitives, such as tools, resources, prompts and notifications.

- Transport layer: Defines the communication mechanisms and channels that enable data exchange between clients and servers, including transport-specific connection establishment, message framing, and authorization.

Conceptually the data layer is the inner layer, while the transport layer is the outer layer.

The data layer implements a JSON-RPC 2.0 based exchange protocol that defines the message structure and semantics. This layer includes:

- Lifecycle management: Handles connection initialization, capability negotiation, and connection termination between clients and servers

- Server features: Enables servers to provide core functionality to clients, including tools for AI actions, resources for context data, and prompts for interaction templates

- Client features: Enables servers to ask the client to sample from the host LLM, elicit input from the user, and log messages to the client

- Utility features: Supports additional capabilities like notifications for real-time updates and progress tracking for long-running operations

The transport layer manages communication channels and authentication between clients and servers. It handles connection establishment, message framing, and secure communication between MCP participants.

MCP supports two transport mechanisms:

- Stdio transport: Uses standard input/output streams for direct process communication between local processes on the same machine, providing optimal performance with no network overhead.

- Streamable HTTP transport: Uses HTTP POST for client-to-server messages with optional Server-Sent Events for streaming capabilities. This transport enables remote server communication and supports standard HTTP authentication methods including bearer tokens, API keys, and custom headers. MCP recommends using OAuth to obtain authentication tokens.

The transport layer abstracts communication details from the protocol layer, enabling the same JSON-RPC 2.0 message format across all transport mechanisms.
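To make the separation concrete, here is a small sketch in which two toy transports frame messages differently while the JSON-RPC payload stays byte-for-byte identical. The interface and class names (`SketchTransport`, `LineFramedTransport`, `PerMessageTransport`) are assumptions made for this example and do not correspond to the SDK's transport types.

```typescript
// Hypothetical interface for illustration; the SDK's own transport types differ.
interface SketchTransport {
  send(message: string): void;
  received(): string[];
}

// Frames each message as one line, loosely mirroring a stdio-style channel.
class LineFramedTransport implements SketchTransport {
  private buffer = '';

  send(message: string): void {
    this.buffer += message + '\n';
  }

  received(): string[] {
    return this.buffer.split('\n').filter(line => line.length > 0);
  }
}

// Keeps messages as discrete bodies, loosely mirroring one HTTP POST per message.
class PerMessageTransport implements SketchTransport {
  private bodies: string[] = [];

  send(message: string): void {
    this.bodies.push(message);
  }

  received(): string[] {
    return [...this.bodies];
  }
}

// The data layer produces the same JSON-RPC 2.0 message for either transport.
const pingMessage = JSON.stringify({ jsonrpc: '2.0', id: 1, method: 'ping' });

const transportsUnderTest: SketchTransport[] = [new LineFramedTransport(), new PerMessageTransport()];
const roundTripped = transportsUnderTest.map(t => {
  t.send(pingMessage);
  return t.received()[0];
});

console.log(roundTripped.every(m => m === pingMessage));
```

Only the framing differs between the two classes; the code that builds and reads the message does not need to know which transport carried it.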

A core part of MCP is defining the schema and semantics between MCP clients and MCP servers. Developers will likely find the data layer — in particular, the set of primitives — to be the most interesting part of MCP. It is the part of MCP that defines the ways developers can share context from MCP servers to MCP clients.

MCP uses JSON-RPC 2.0 as its underlying RPC protocol. Clients and servers send requests to each other and respond accordingly. Notifications can be used when no response is required.
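The three JSON-RPC 2.0 message kinds can be told apart by their fields alone: a request has both `method` and `id`, a notification has `method` but no `id`, and a response has `id` with a `result` or `error`. The sketch below encodes that rule; the type and function names are hypothetical and simplified compared with the SDK's own types.

```typescript
// Simplified, hypothetical types for illustration.
type JsonRpcId = string | number;

interface RpcRequest {
  jsonrpc: '2.0';
  id: JsonRpcId;
  method: string;
  params?: unknown;
}

interface RpcNotification {
  jsonrpc: '2.0';
  method: string;
  params?: unknown;
}

interface RpcResponse {
  jsonrpc: '2.0';
  id: JsonRpcId;
  result?: unknown;
  error?: { code: number; message: string };
}

type RpcMessage = RpcRequest | RpcNotification | RpcResponse;

function classifyRpcMessage(message: RpcMessage): 'request' | 'notification' | 'response' {
  if ('method' in message) {
    // A method with an id expects a reply; a method without one does not.
    return 'id' in message ? 'request' : 'notification';
  }
  return 'response';
}

console.log(classifyRpcMessage({ jsonrpc: '2.0', id: 1, method: 'initialize' }));
console.log(classifyRpcMessage({ jsonrpc: '2.0', method: 'notifications/initialized' }));
console.log(classifyRpcMessage({ jsonrpc: '2.0', id: 1, result: {} }));
```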

MCP is a stateful protocol that requires lifecycle management. The purpose of lifecycle management is to negotiate the capabilities that both client and server support. Detailed information can be found in the specification, and the example showcases the initialization sequence.

MCP primitives are the most important concept within MCP. They define what clients and servers can offer each other. These primitives specify the types of contextual information that can be shared with AI applications and the range of actions that can be performed.

MCP defines three core primitives that servers can expose:

- Tools: Executable functions that AI applications can invoke to perform actions (e.g., file operations, API calls, database queries)

- Resources: Data sources that provide contextual information to AI applications (e.g., file contents, database records, API responses)

- Prompts: Reusable templates that help structure interactions with language models (e.g., system prompts, few-shot examples)

Each primitive type has associated methods for discovery (*/list), retrieval (*/get), and in some cases, execution (tools/call).

MCP clients will use the */list methods to discover available primitives. For example, a client can first list all available tools (tools/list) and then execute them. This design allows listings to be dynamic.

As a concrete example, consider an MCP server that provides context about a database. It can expose tools for querying the database, a resource that contains the schema of the database, and a prompt that includes few-shot examples for interacting with the tools.
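The discover-then-call pattern described above can be sketched with an in-memory stand-in for a server. Everything here is hypothetical (`InMemoryToolServer`, `SketchTool`, and the `echo_upper` and `echo_lower` tools); a real client would send `tools/list` and `tools/call` over a transport instead of calling methods directly.

```typescript
type ToolArgs = Record<string, unknown>;

interface SketchTool {
  name: string;
  description: string;
  run: (args: ToolArgs) => string;
}

// Hypothetical in-memory stand-in for a server.
class InMemoryToolServer {
  private tools = new Map<string, SketchTool>();

  register(tool: SketchTool): void {
    this.tools.set(tool.name, tool);
  }

  // Mirrors tools/list: returns metadata only, never the implementation.
  listTools(): { name: string; description: string }[] {
    const listed: { name: string; description: string }[] = [];
    this.tools.forEach(t => listed.push({ name: t.name, description: t.description }));
    return listed;
  }

  // Mirrors tools/call: looks the tool up by the name the client discovered.
  callTool(name: string, args: ToolArgs): string {
    const tool = this.tools.get(name);
    if (!tool) {
      throw new Error(`Unknown tool: ${name}`);
    }
    return tool.run(args);
  }
}

const toolServer = new InMemoryToolServer();
toolServer.register({
  name: 'echo_upper',
  description: 'Upper-cases the given text',
  run: args => String(args['text']).toUpperCase()
});

// Discover first, then call by the discovered name.
const discovered = toolServer.listTools();
const discoveredNames = discovered.map(t => t.name);
const firstResult = toolServer.callTool(discoveredNames[0] ?? '', { text: 'hello' });

// Because listings are dynamic, a tool registered later appears in the next listing.
toolServer.register({
  name: 'echo_lower',
  description: 'Lower-cases the given text',
  run: args => String(args['text']).toLowerCase()
});
const discoveredAgain = toolServer.listTools();

console.log(firstResult, discoveredAgain.length);
```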

For more details about server primitives see server concepts.

MCP also defines primitives that clients can expose. These primitives allow MCP server authors to build richer interactions.

- Sampling: Allows servers to request language model completions from the client's AI application. This is useful when server authors want access to a language model but want to stay model-independent and not include a language model SDK in their MCP server. They can use the `sampling/createMessage` method to request a language model completion from the client's AI application.

- Elicitation: Allows servers to request additional information from users. This is useful when server authors want to get more information from the user, or ask for confirmation of an action. They can use the `elicitation/create` method to request additional information from the user.

- Logging: Enables servers to send log messages to clients for debugging and monitoring purposes.

For more details about client primitives see client concepts.

The protocol supports real-time notifications to enable dynamic updates between servers and clients. For example, when a server's available tools change—such as when new functionality becomes available or existing tools are modified—the server can send tool update notifications to inform connected clients about these changes. Notifications are sent as JSON-RPC 2.0 notification messages (without expecting a response) and enable MCP servers to provide real-time updates to connected clients.
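A tool-list change notification carries no payload, so a client typically reacts by fetching the list again. The sketch below shows that reaction with a hypothetical `ToolCache` class and a plain function standing in for a `tools/list` round trip; it is an assumption-laden illustration rather than SDK code.

```typescript
// Hypothetical client-side cache; fetchTools stands in for a tools/list request.
class ToolCache {
  private names: string[] = [];
  private fetchTools: () => string[];
  refreshCount = 0;

  constructor(fetchTools: () => string[]) {
    this.fetchTools = fetchTools;
    this.refresh(); // initial discovery
  }

  refresh(): void {
    this.names = this.fetchTools();
    this.refreshCount++;
  }

  // Re-fetch only for the tool list change notification; ignore everything else.
  onNotification(method: string): void {
    if (method === 'notifications/tools/list_changed') {
      this.refresh();
    }
  }

  current(): string[] {
    return [...this.names];
  }
}

let serverSideTools = ['weather_current'];
const toolCache = new ToolCache(() => serverSideTools);

// The server gains a tool and announces the change.
serverSideTools = ['weather_current', 'calculator_arithmetic'];
toolCache.onNotification('notifications/tools/list_changed');

// An unrelated notification does not trigger a refresh.
toolCache.onNotification('notifications/progress');

console.log(toolCache.current(), toolCache.refreshCount);
```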

This section provides a step-by-step walkthrough of an MCP client-server interaction, focusing on the data layer protocol. We'll demonstrate the lifecycle sequence, tool operations, and notifications using JSON-RPC 2.0 messages.

MCP begins with lifecycle management through a capability negotiation handshake. As described in the [lifecycle management](#lifecycle-management) section, the client sends an `initialize` request to establish the connection and negotiate supported features.

<CodeGroup>

```json Initialize Request theme={null}

{

"jsonrpc": "2.0",

"id": 1,

"method": "initialize",

"params": {

"protocolVersion": "2025-06-18",

"capabilities": {

"elicitation": {}

},

"clientInfo": {

"name": "example-client",

"version": "1.0.0"

}

}

}

```

```json Initialize Response theme={null}

{

"jsonrpc": "2.0",

"id": 1,

"result": {

"protocolVersion": "2025-06-18",

"capabilities": {

"tools": {

"listChanged": true

},

"resources": {}

},

"serverInfo": {

"name": "example-server",

"version": "1.0.0"

}

}

}

```

</CodeGroup>

#### Understanding the Initialization Exchange

The initialization process is a key part of MCP's lifecycle management and serves several critical purposes:

1. **Protocol Version Negotiation**: The `protocolVersion` field (e.g., "2025-06-18") ensures both client and server are using compatible protocol versions. This prevents communication errors that could occur when different versions attempt to interact. If a mutually compatible version is not negotiated, the connection should be terminated.

2. **Capability Discovery**: The `capabilities` object allows each party to declare what features they support, including which [primitives](#primitives) they can handle (tools, resources, prompts) and whether they support features like [notifications](#notifications). This enables efficient communication by avoiding unsupported operations.

3. **Identity Exchange**: The `clientInfo` and `serverInfo` objects provide identification and versioning information for debugging and compatibility purposes.

In this example, the capability negotiation demonstrates how MCP primitives are declared:

**Client Capabilities**:

* `"elicitation": {}` - The client declares it can work with user interaction requests (can receive `elicitation/create` method calls)

**Server Capabilities**:

* `"tools": {"listChanged": true}` - The server supports the tools primitive and can send `notifications/tools/list_changed` notifications when its tool list changes

* `"resources": {}` - The server also supports the resources primitive (can handle `resources/list` and `resources/read` methods)
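A client might turn the `initialize` result into a few simple flags before deciding which requests it is allowed to send. The following sketch assumes a client that supports only protocol version `2025-06-18`; `InitializeResultSketch`, `SUPPORTED_VERSIONS`, and `summarizeServer` are hypothetical names, and throwing on a version mismatch is one possible way to terminate the connection.

```typescript
// Simplified, hypothetical shape of the initialize result used in this walkthrough.
interface InitializeResultSketch {
  protocolVersion: string;
  capabilities: {
    tools?: { listChanged?: boolean };
    resources?: Record<string, unknown>;
    prompts?: Record<string, unknown>;
  };
}

// Assumption: this client understands a single protocol version.
const SUPPORTED_VERSIONS = ['2025-06-18'];

function summarizeServer(result: InitializeResultSketch) {
  // Refuse to continue when no mutually compatible version was negotiated.
  if (SUPPORTED_VERSIONS.indexOf(result.protocolVersion) === -1) {
    throw new Error(`Unsupported protocol version: ${result.protocolVersion}`);
  }
  return {
    tools: result.capabilities.tools !== undefined,
    toolListChanged: result.capabilities.tools?.listChanged === true,
    resources: result.capabilities.resources !== undefined,
    prompts: result.capabilities.prompts !== undefined
  };
}

// The server response from the example above.
const serverSummary = summarizeServer({
  protocolVersion: '2025-06-18',
  capabilities: { tools: { listChanged: true }, resources: {} }
});

console.log(serverSummary);
```

For the example response, the summary reports tools, tool list change notifications, and resources as supported, and prompts as unsupported because the server did not declare them.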

After successful initialization, the client sends a notification to indicate it's ready:

```json Notification theme={null}

{

"jsonrpc": "2.0",

"method": "notifications/initialized"

}

```

#### How This Works in AI Applications

During initialization, the AI application's MCP client manager establishes connections to configured servers and stores their capabilities for later use. The application uses this information to determine which servers can provide specific types of functionality (tools, resources, prompts) and whether they support real-time updates.

```python Pseudo-code for AI application initialization theme={null}

# Pseudo-code
async with stdio_client(server_config) as (read, write):
    async with ClientSession(read, write) as session:
        init_response = await session.initialize()
        if init_response.capabilities.tools:
            app.register_mcp_server(session, supports_tools=True)
        app.set_server_ready(session)

```

<CodeGroup>

```json Tools List Request theme={null}

{

"jsonrpc": "2.0",

"id": 2,

"method": "tools/list"

}

```

```json Tools List Response theme={null}

{

"jsonrpc": "2.0",

"id": 2,

"result": {

"tools": [

{

"name": "calculator_arithmetic",

"title": "Calculator",

"description": "Perform mathematical calculations including basic arithmetic, trigonometric functions, and algebraic operations",

"inputSchema": {

"type": "object",

"properties": {

"expression": {

"type": "string",

"description": "Mathematical expression to evaluate (e.g., '2 + 3 * 4', 'sin(30)', 'sqrt(16)')"

}

},

"required": ["expression"]

}

},

{

"name": "weather_current",

"title": "Weather Information",

"description": "Get current weather information for any location worldwide",

"inputSchema": {

"type": "object",

"properties": {

"location": {

"type": "string",

"description": "City name, address, or coordinates (latitude,longitude)"

},

"units": {

"type": "string",

"enum": ["metric", "imperial", "kelvin"],

"description": "Temperature units to use in response",

"default": "metric"

}

},

"required": ["location"]

}

}

]

}

}

```

</CodeGroup>

#### Understanding the Tool Discovery Request

The `tools/list` request is simple, containing no parameters.

#### Understanding the Tool Discovery Response

The response contains a `tools` array that provides comprehensive metadata about each available tool. This array-based structure allows servers to expose multiple tools simultaneously while maintaining clear boundaries between different functionalities.

Each tool object in the response includes several key fields:

* **`name`**: A unique identifier for the tool within the server's namespace. This serves as the primary key for tool execution and should follow a clear naming pattern (e.g., `calculator_arithmetic` rather than just `calculate`)

* **`title`**: A human-readable display name for the tool that clients can show to users

* **`description`**: Detailed explanation of what the tool does and when to use it

* **`inputSchema`**: A JSON Schema that defines the expected input parameters, enabling type validation and providing clear documentation about required and optional parameters
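To make the role of `inputSchema` more concrete, here is a minimal sketch of how a client might check arguments before sending a call. It hand-rolls only a small subset of JSON Schema (`required`, primitive `type`, and `enum`). The helper name `check_arguments` is hypothetical, and a real application would more likely rely on a full JSON Schema validator.

```python
# Hypothetical helper: checks a small subset of JSON Schema (required, type, enum).
JSON_TYPES = {
    "string": str,
    "number": (int, float),
    "integer": int,
    "boolean": bool,
    "object": dict,
    "array": list,
}


def check_arguments(input_schema: dict, arguments: dict) -> list:
    """Return a list of human-readable problems; an empty list means OK."""
    problems = []
    properties = input_schema.get("properties", {})

    # Every name listed under "required" must be present.
    for name in input_schema.get("required", []):
        if name not in arguments:
            problems.append(f"missing required parameter: {name}")

    for name, value in arguments.items():
        spec = properties.get(name)
        if spec is None:
            continue  # unknown parameters are ignored in this sketch
        expected = JSON_TYPES.get(spec.get("type"))
        # bool is a subclass of int in Python, so reject it for numeric types.
        is_bool_as_number = isinstance(value, bool) and spec.get("type") in ("number", "integer")
        if expected is not None and (not isinstance(value, expected) or is_bool_as_number):
            problems.append(f"{name}: expected {spec['type']}")
        elif "enum" in spec and value not in spec["enum"]:
            problems.append(f"{name}: must be one of {spec['enum']}")
    return problems


# Trimmed copy of the weather_current schema from the discovery response.
weather_schema = {
    "type": "object",
    "properties": {
        "location": {"type": "string"},
        "units": {"type": "string", "enum": ["metric", "imperial", "kelvin"]},
    },
    "required": ["location"],
}
```

Calling `check_arguments(weather_schema, {"units": "metric"})` reports the missing `location`, while a complete argument set yields an empty list.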

#### How This Works in AI Applications

The AI application fetches available tools from all connected MCP servers and combines them into a unified tool registry that the language model can access. This lets the LLM understand what actions it can perform and generate the appropriate tool calls during conversations.

```python Pseudo-code for AI application tool discovery theme={null}
# Pseudo-code using MCP Python SDK patterns; `app` and `conversation` are placeholders
available_tools = []
for session in app.mcp_server_sessions():
    # Each session answers tools/list with its own tool definitions
    tools_response = await session.list_tools()
    available_tools.extend(tools_response.tools)
# Expose the combined list to the language model
conversation.register_available_tools(available_tools)
```
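The registry idea can also be sketched as something runnable. The example below uses stand-in sessions so that it works without a live MCP server. `FakeSession`, `Tool`, `ListToolsResult`, and `build_registry` are all hypothetical names, and the collision policy (the first server to claim a name keeps it) is an assumption, since the text above does not specify one.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Tool:
    name: str
    description: str = ""


@dataclass
class ListToolsResult:
    tools: list


class FakeSession:
    """Stand-in for a connected MCP client session (hypothetical)."""

    def __init__(self, label, tools):
        self.label = label
        self._tools = tools

    async def list_tools(self):
        return ListToolsResult(tools=self._tools)


async def build_registry(sessions):
    """Map each tool name to (session, tool) and report name collisions."""
    registry, collisions = {}, []
    for session in sessions:
        response = await session.list_tools()
        for tool in response.tools:
            if tool.name in registry:
                # Assumed policy: keep the first server that claimed the name.
                collisions.append((tool.name, session.label))
                continue
            registry[tool.name] = (session, tool)
    return registry, collisions


math_server = FakeSession("math", [Tool("calculator_arithmetic")])
weather_server = FakeSession(
    "weather", [Tool("weather_current"), Tool("calculator_arithmetic")]
)
registry, collisions = asyncio.run(build_registry([math_server, weather_server]))
```

Keeping the owning session next to each tool definition means a later tool call can be routed by name alone.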

#### Understanding the Tool Execution Request

The `tools/call` request follows a structured format that ensures type safety and clear communication between client and server. Note that we're using the proper tool name from the discovery response (`weather_current`) rather than a simplified name:

<CodeGroup>

```json Tool Call Request theme={null}
{
  "jsonrpc": "2.0",
  "id": 3,
  "method": "tools/call",
  "params": {
    "name": "weather_current",
    "arguments": {
      "location": "San Francisco",
      "units": "imperial"
    }
  }
}
```

```json Tool Call Response theme={null}
{
  "jsonrpc": "2.0",
  "id": 3,
  "result": {
    "content": [
      {
        "type": "text",
        "text": "Current weather in San Francisco: 68°F, partly cloudy with light winds from the west at 8 mph. Humidity: 65%"
      }
    ]
  }
}
```

</CodeGroup>

#### Key Elements of Tool Execution

The request structure includes several important components:

1. **`name`**: Must match exactly the tool name from the discovery response (`weather_current`). This ensures the server can correctly identify which tool to execute.

2. **`arguments`**: Contains the input parameters as defined by the tool's `inputSchema`. In this example:

   * `location`: "San Francisco" (required parameter)

   * `units`: "imperial" (optional parameter, defaults to "metric" if not specified)

3. **JSON-RPC Structure**: Uses standard JSON-RPC 2.0 format with unique `id` for request-response correlation.
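The three elements above can be assembled with a few lines of code. This is a minimal sketch, not how any particular SDK does it: `RequestBuilder` is a hypothetical helper, and the use of a simple incrementing counter for `id` is an assumption (JSON-RPC only requires that the id lets the client match a response to its request).

```python
import itertools
import json
from typing import Optional


class RequestBuilder:
    """Builds JSON-RPC 2.0 requests with a fresh id per request (hypothetical helper)."""

    def __init__(self, first_id: int = 1):
        self._ids = itertools.count(first_id)

    def request(self, method: str, params: Optional[dict] = None) -> dict:
        message = {"jsonrpc": "2.0", "id": next(self._ids), "method": method}
        if params is not None:
            message["params"] = params
        return message

    def call_tool(self, name: str, arguments: dict) -> dict:
        # `name` must match a tool name returned by tools/list.
        return self.request("tools/call", {"name": name, "arguments": arguments})


builder = RequestBuilder(first_id=3)
call = builder.call_tool(
    "weather_current", {"location": "San Francisco", "units": "imperial"}
)
wire_text = json.dumps(call)  # what would be written to the transport
```

Here `call` has the same shape as the Tool Call Request shown earlier, and the next request from the same builder would carry `id` 4.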

#### Understanding the Tool Execution Response

The response demonstrates MCP's flexible content system:

1. **`content` Array**: Tool responses return an array of content objects, allowing for rich, multi-format responses (text, images, resources, etc.)

2. **Content Types**: Each content object has a `type` field. In this example, `"type": "text"` indicates plain text content, but MCP supports various content types for different use cases.

3. **Structured Output**: The response provides actionable information that the AI application can use as context for language model interactions.

This execution pattern allows AI applications to dynamically invoke server functionality and receive structured responses that can be integrated into conversations with language models.
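As an illustration of consuming the `content` array, the sketch below renders a tool result as plain text for the model's context. The function name `content_to_text` is hypothetical, and the fallback of inserting a short note for non-text items is an assumption; an application might instead pass images or resources to the model in their native form.

```python
def content_to_text(content: list) -> str:
    """Render a tool result's content array as plain text for the model."""
    parts = []
    for item in content:
        kind = item.get("type")
        if kind == "text":
            parts.append(item["text"])
        else:
            # Assumed fallback: note non-text items instead of dropping them silently.
            parts.append(f"[{kind} content omitted]")
    return "\n".join(parts)


# Example result; the image entry uses placeholder data for illustration only.
result = {
    "content": [
        {"type": "text", "text": "Current weather in San Francisco: 68F, partly cloudy"},
        {"type": "image", "data": "<base64 placeholder>", "mimeType": "image/png"},
    ]
}
rendered = content_to_text(result["content"])
```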

#### How This Works in AI Applications

When the language model decides to use a tool during a conversation, the AI application intercepts the tool call, routes it to the appropriate MCP server for execution, and returns the results to the LLM as part of the conversation flow. This enables the LLM to access real-time data and perform actions in the external world.

```python theme={null}
# Pseudo-code for AI application tool execution
async def handle_tool_call(conversation, tool_name, arguments):
    # Look up which connected server exposes this tool
    session = app.find_mcp_session_for_tool(tool_name)
    result = await session.call_tool(tool_name, arguments)
    # Feed the tool output back into the conversation for the LLM
    conversation.add_tool_result(result.content)
```

#### Understanding Tool List Change Notifications

When the server's available tools change—such as when new functionality becomes available, existing tools are modified, or tools become temporarily unavailable—the server can proactively notify connected clients:

```json Request theme={null}
{
  "jsonrpc": "2.0",
  "method": "notifications/tools/list_changed"
}
```

#### Key Features of MCP Notifications

1. **No Response Required**: Notice there's no `id` field in the notification. This follows JSON-RPC 2.0 notification semantics where no response is expected or sent.

2. **Capability-Based**: This notification is only sent by servers that declared `"listChanged": true` in their tools capability during initialization (as shown in Step 1).

3. **Event-Driven**: The server decides when to send notifications based on internal state changes, making MCP connections dynamic and responsive.
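The first two points can be expressed as small checks on the message and on the capabilities object. This is a sketch under stated assumptions: `classify` and `expects_list_changed` are hypothetical helper names, and messages are assumed to be already-parsed JSON objects.

```python
def classify(message: dict) -> str:
    """Classify a JSON-RPC 2.0 message by the fields it carries."""
    if "method" in message:
        # A method without an id is a notification: no response is expected.
        return "request" if "id" in message else "notification"
    if "result" in message or "error" in message:
        return "response"
    return "invalid"


def expects_list_changed(capabilities: dict) -> bool:
    """True if the server declared "listChanged": true under its tools capability."""
    return capabilities.get("tools", {}).get("listChanged") is True


notification = {"jsonrpc": "2.0", "method": "notifications/tools/list_changed"}
kind = classify(notification)
```

A client could use `expects_list_changed` at initialization time to decide whether it needs to register a handler for this notification at all.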

#### Client Response to Notifications

Upon receiving this notification, the client typically reacts by requesting the updated tool list. This creates a refresh cycle that keeps the client's understanding of available tools current:

```json Request theme={null}
{
  "jsonrpc": "2.0",
  "id": 4,
  "method": "tools/list"
}
```

#### Why Notifications Matter

This notification system is crucial for several reasons:

1. **Dynamic Environments**: Tools may come and go based on server state, external dependencies, or user permissions

2. **Efficiency**: Clients don't need to poll for changes; they're notified when updates occur

3. **Consistency**: Ensures clients always have accurate information about available server capabilities

4. **Real-time Collaboration**: Enables responsive AI applications that can adapt to changing contexts

This notification pattern extends beyond tools to other MCP primitives, enabling comprehensive real-time synchronization between clients and servers.

#### How This Works in AI Applications

When the AI application receives a notification about changed tools, it immediately refreshes its tool registry and updates the LLM's available capabilities. This ensures that ongoing conversations always have access to the most current set of tools, and the LLM can dynamically adapt to new functionality as it becomes available.

```python theme={null}
# Pseudo-code for AI application notification handling
async def handle_tools_changed_notification(session):
    # Re-fetch the tool list from the server that sent the notification
    tools_response = await session.list_tools()
    app.update_available_tools(session, tools_response.tools)
    if app.conversation.is_active():
        app.conversation.notify_llm_of_new_capabilities()
```
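One way an application might decide what to tell the LLM after a refresh is to compare the previous and current tool lists. The sketch below is an assumption about how such an update step could work, not something the protocol prescribes. `diff_tools` is a hypothetical helper, and `weather_forecast` is a made-up tool name used only for the example.

```python
def diff_tools(old_tools: list, new_tools: list) -> dict:
    """Compare two tools/list results by tool name."""
    old = {tool["name"]: tool for tool in old_tools}
    new = {tool["name"]: tool for tool in new_tools}
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        # Same name, but the definition (description, schema, ...) differs.
        "changed": sorted(
            name for name in old.keys() & new.keys() if old[name] != new[name]
        ),
    }


before = [
    {"name": "calculator_arithmetic", "description": "Basic arithmetic"},
    {"name": "weather_current", "description": "Current weather"},
]
after = [
    {"name": "calculator_arithmetic", "description": "Arithmetic and trigonometry"},
    {"name": "weather_forecast", "description": "Multi-day forecast"},
]
changes = diff_tools(before, after)
```

With a diff like this, the application can skip notifying the model when a refresh turns out to contain no actual changes.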

MCP servers are programs that expose specific capabilities to AI applications through standardized protocol interfaces.

Common examples include file system servers for document access, database servers for data queries, GitHub servers for code management, Slack servers for team communication, and calendar servers for scheduling.

Servers provide functionality through three building blocks:

| Feature | Explanation | Examples | Who controls it |
|---|---|---|---|
| Tools | Functions that your LLM can actively call, deciding when to use them based on user requests. Tools can write to databases, call external APIs, modify files, or trigger other logic. | Search flights, Send messages, Create calendar events | Model |
| Resources | Passive data sources that provide read-only access to information for context, such as file contents, database schemas, or API documentation. | Retrieve documents, Access knowledge bases, Read calendars | Application |
| Prompts | Pre-built instruction templates that tell the model to work with specific tools and resources. | Plan a vacation, Summarize my meetings, Draft an email | User |

We will use a hypothetical scenario to demonstrate the role of each of these features, and show how they can work together.

Tools enable AI models to perform actions. Each tool defines a specific operation with typed inputs and outputs. The model requests tool execution based on context.

Tools are schema-defined interfaces that LLMs can invoke. MCP uses JSON Schema for validation. Each tool performs a single operation with clearly defined inputs and outputs. Tools may require user consent prior to execution, helping to ensure users maintain control over actions taken by a model.

Protocol operations:

| Method | Purpose | Returns |
|---|---|---|
| `tools/list` | Discover available tools | Array of tool definitions with schemas |
| `tools/call` | Execute a specific tool | Tool execution result |

Example tool definition:

```typescript
{
  name: "searchFlights",
  description: "Search for available flights",
  inputSchema: {
    type: "object",
    properties: {
      origin: { type: "string", description: "Departure city" },
      destination: { type: "string", description: "Arrival city" },
      date: { type: "string", format: "date", description: "Travel date" }
    },
    required: ["origin", "destination", "date"]
  }
}
```

Tools enable AI applications to perform actions on behalf of users. In a travel planning scenario, the AI application might use several tools to help book a vacation:

**Flight Search**

`searchFlights(origin: "NYC", destination: "Barcelona", date: "2024-06-15")`

Queries multiple airlines and returns structured flight options.

**Calendar Blocking**

`createCalendarEvent(title: "Barcelona Trip", startDate: "2024-06-15", endDate: "2024-06-22")`

Marks the travel dates in the user's calendar.

**Email Notification**

`sendEmail(to: "team@work.com", subject: "Out of Office", body: "...")`

Sends an automated out-of-office message to colleagues.