This is a full event-driven batch pipeline on AWS, deployed using Terraform.

The architecture for this is shown below.

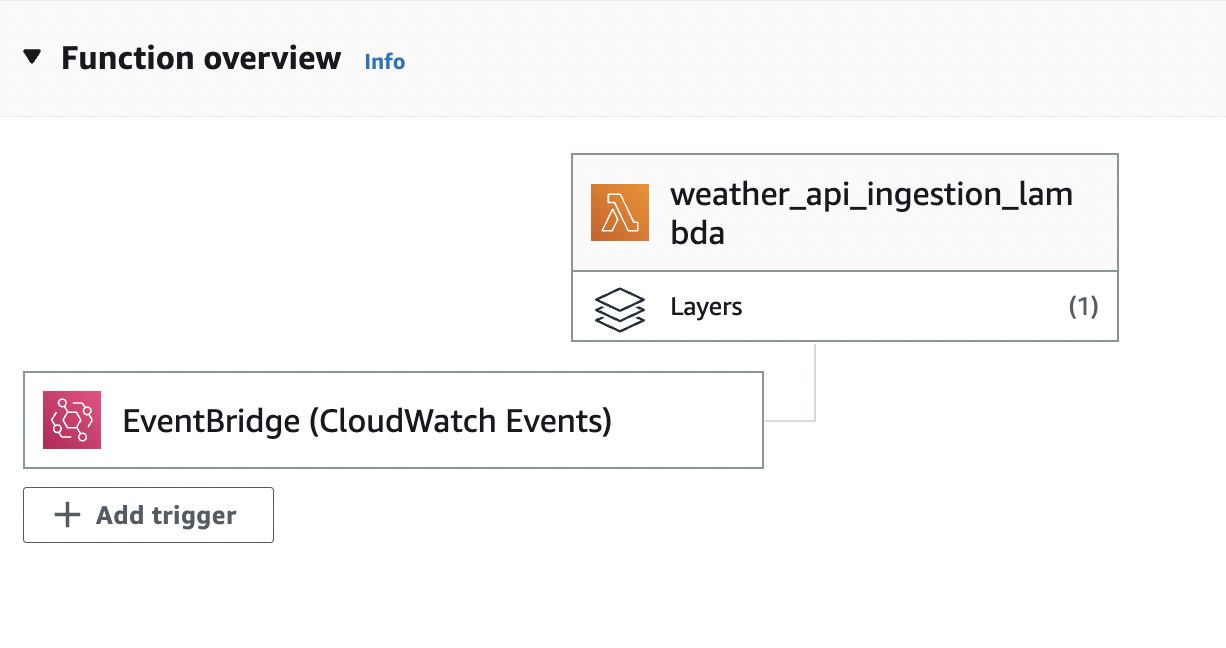

This project uses three Lambda functions. The first Lambda function (ingestion_lambda.py) extracts weather information from an API every hour, on a schedule driven by Amazon CloudWatch Events. The architecture flow diagram for this is shown below.
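A minimal sketch of what such an ingestion handler could look like is given here. It is not the contents of ingestion_lambda.py: the environment variable names `WEATHER_API_URL` and `RAW_BUCKET`, the helper names, and the hour-partitioned key layout are all assumptions made for illustration.

```python
import json
import os
import urllib.request
from datetime import datetime, timezone


def build_raw_key(now: datetime) -> str:
    """Return an hour-partitioned object key (hypothetical layout)."""
    return now.strftime("raw/%Y/%m/%d/%H.json")


def fetch_weather(url: str, timeout: float = 10.0) -> dict:
    """Download and decode one JSON payload from the weather API."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def lambda_handler(event, context):
    # boto3 is imported lazily so the helpers above can be exercised
    # locally without it being installed.
    import boto3

    # WEATHER_API_URL and RAW_BUCKET are assumed variable names.
    payload = fetch_weather(os.environ["WEATHER_API_URL"])
    key = build_raw_key(datetime.now(timezone.utc))
    boto3.client("s3").put_object(
        Bucket=os.environ["RAW_BUCKET"],
        Key=key,
        Body=json.dumps(payload).encode("utf-8"),
        ContentType="application/json",
    )
    return {"statusCode": 200, "key": key}
```

Writing one object per hour under a date-based prefix keeps each scheduled run idempotent: a retried invocation within the same hour overwrites the same key instead of creating a duplicate.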

The extracted weather data is then stored in a raw S3 bucket in JSON format. Transformations are applied to the data in this raw bucket, and the results are written to another S3 bucket in CSV format.
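The JSON-to-CSV step can be sketched as a small pure function. The column names in `FIELDS` are hypothetical, since the actual schema of the weather payload and the exact transformations applied are not described here.

```python
import csv
import io
import json

# Hypothetical column set; the real payload schema may differ.
FIELDS = ["city", "timestamp", "temperature_c", "humidity"]


def records_to_csv(raw_json: str) -> str:
    """Convert a JSON object, or a list of objects, into CSV text."""
    records = json.loads(raw_json)
    if isinstance(records, dict):
        records = [records]  # treat a single reading as a one-row table

    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for record in records:
        # Keep only the known columns; missing values become empty cells.
        writer.writerow({field: record.get(field, "") for field in FIELDS})
    return buffer.getvalue()
```

Keeping the conversion free of AWS calls means it can be unit tested on sample payloads before being wired to the raw and transformed buckets.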

The second Lambda function (upload_lambda.py) receives events from the transformed bucket, processes them, and sends the results to a DynamoDB table and an SNS topic.
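As an illustration of this step, the sketch below parses an S3 event notification, reads the CSV object, writes each row to DynamoDB, and publishes a short summary to SNS. It is not the contents of upload_lambda.py: `TABLE_NAME` and `TOPIC_ARN` are assumed environment variable names, and the summary message format is invented for the example.

```python
import csv
import io
import json
import os
from urllib.parse import unquote_plus


def extract_s3_objects(event: dict) -> list:
    """Return (bucket, key) pairs from an S3 event notification."""
    return [
        # Object keys arrive URL-encoded in S3 notifications.
        (r["s3"]["bucket"]["name"], unquote_plus(r["s3"]["object"]["key"]))
        for r in event.get("Records", [])
    ]


def csv_to_items(csv_text: str) -> list:
    """Parse CSV text into one dict per row, keyed by the header line."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def lambda_handler(event, context):
    import boto3  # imported lazily so the helpers run without it

    s3 = boto3.client("s3")
    sns = boto3.client("sns")
    # TABLE_NAME and TOPIC_ARN are assumed variable names.
    table = boto3.resource("dynamodb").Table(os.environ["TABLE_NAME"])

    written = 0
    for bucket, key in extract_s3_objects(event):
        body = s3.get_object(Bucket=bucket, Key=key)["Body"].read()
        items = csv_to_items(body.decode("utf-8"))
        # Each row must contain the table's key attributes.
        with table.batch_writer() as batch:
            for item in items:
                batch.put_item(Item=item)
        sns.publish(
            TopicArn=os.environ["TOPIC_ARN"],
            Message=json.dumps({"key": key, "rows": len(items)}),
        )
        written += len(items)
    return {"rows_written": written}
```

All CSV values are stored as strings in this sketch; numeric attributes would need an explicit conversion (for example to `Decimal`) before being written.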

The diagram for this is shown below.

An SQS queue is subscribed to the SNS topic, and a third Lambda function aggregates the queued messages on a daily basis.
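One way the daily aggregation could work is sketched below, assuming the function runs on a schedule and drains the queue itself. The numeric field `temperature_c`, the choice of statistics, and both function names are hypothetical. The sketch also assumes raw message delivery is disabled on the subscription, so each SQS body is an SNS envelope whose `Message` field holds the payload.

```python
import json
from statistics import mean


def unwrap_sns(body: str) -> dict:
    """Extract the JSON payload from an SNS envelope delivered to SQS."""
    envelope = json.loads(body)
    return json.loads(envelope["Message"])


def aggregate(bodies, field="temperature_c"):
    """Summarise one numeric field across a batch of message bodies."""
    values = [float(unwrap_sns(body)[field]) for body in bodies]
    if not values:
        return {"count": 0}
    return {
        "count": len(values),
        "min": min(values),
        "max": max(values),
        "mean": mean(values),
    }


def drain_queue(sqs, queue_url):
    """Read and delete messages until a long poll returns nothing.

    `sqs` is a boto3 SQS client, passed in by the caller.
    """
    bodies = []
    while True:
        response = sqs.receive_message(
            QueueUrl=queue_url, MaxNumberOfMessages=10, WaitTimeSeconds=1
        )
        messages = response.get("Messages", [])
        if not messages:
            return bodies
        for message in messages:
            bodies.append(message["Body"])
            sqs.delete_message(
                QueueUrl=queue_url, ReceiptHandle=message["ReceiptHandle"]
            )
```

A handler would call `aggregate(drain_queue(boto3.client("sqs"), queue_url))` once per day. Note that this sketch deletes messages before the aggregate is computed, so a production version would more likely delete them only after the result has been stored.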

A Redshift cluster also queries the data from the DynamoDB table for visualization in Amazon QuickSight.

The visualization is shown below.

The resources can be provisioned by running `terraform plan` followed by `terraform apply`.