FAQ

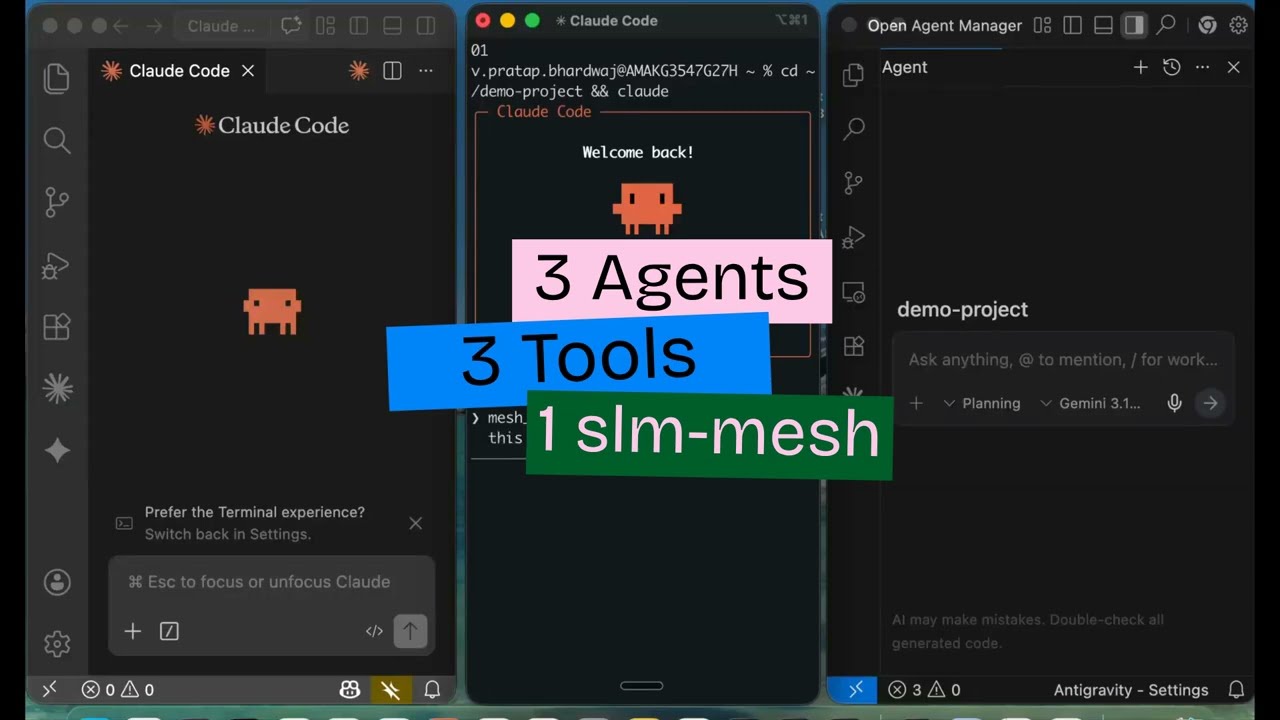

SLM Mesh is an open-source MCP server that gives AI coding agents the ability to discover each other, send messages, share state, lock files, and subscribe to events — all on localhost.

SLM stands for SuperLocalMemory. SLM Mesh is the communication layer of the Qualixar ecosystem.

Yes. SLM Mesh is licensed under the Elastic License 2.0: it is free to use, modify, and distribute. The only restriction is that you can't offer it as a hosted or managed service.

No. Everything runs on localhost. No telemetry, no analytics, no external connections.

Any MCP-compatible agent: Claude Code, Cursor, Aider, Windsurf, Codex, VS Code (GitHub Copilot), Antigravity, and more.

Yes. Claude can talk to Gemini, which can talk to GPT. SLM Mesh is agent-agnostic — it doesn't care which model powers the agent.

Currently tested on macOS. Linux is expected to work, since it supports Unix Domain Sockets. Windows support is planned.

No. SLM Mesh runs as a standard MCP server via stdio. No dangerous flags required.

The first session auto-starts a broker on localhost:7899. Each MCP server registers with the broker. Real-time push is delivered over Unix Domain Sockets. When the last session closes, the broker shuts down automatically.
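To see which stage of this lifecycle you are in, you can probe the broker port. The following is a minimal sketch, not part of SLM Mesh. It assumes the broker accepts plain TCP connections on 127.0.0.1:7899, and the helper name `broker_is_listening` is made up for illustration. A successful connection only shows that something is listening on that port, not that it is the SLM Mesh broker.

```python
import socket


def broker_is_listening(host: str = "127.0.0.1", port: int = 7899,
                        timeout: float = 0.5) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout.

    Assumption: the broker listens for TCP connections on this port.
    """
    try:
        # create_connection raises OSError (refused, timed out, ...) on failure.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False
```

Calling `broker_is_listening()` before opening any session should return `False`. After the first session starts it should return `True`, and it should return `False` again once the last session has closed.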

Data is stored in SQLite with WAL (write-ahead logging) mode enabled. The database is at ~/.slm-mesh/mesh.db.
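For readers unfamiliar with WAL mode, here is a small sketch of how a SQLite database is switched to write-ahead logging using Python's standard `sqlite3` module. It runs against a throwaway file in a temporary directory. The `kv` table and the helper names are made up for illustration and do not reflect the actual schema of mesh.db.

```python
import os
import sqlite3
import tempfile


def open_with_wal(path: str) -> tuple[sqlite3.Connection, str]:
    """Open a SQLite database and switch it to WAL journaling.

    Returns the connection and the journal mode SQLite reports back.
    """
    conn = sqlite3.connect(path)
    # The PRAGMA returns one row containing the journal mode now in effect.
    mode = conn.execute("PRAGMA journal_mode=WAL").fetchone()[0]
    return conn, mode


def demo() -> str:
    """Create a throwaway database, write one row, and return its journal mode."""
    with tempfile.TemporaryDirectory() as tmp:
        conn, mode = open_with_wal(os.path.join(tmp, "demo.db"))
        try:
            # Hypothetical table, for illustration only.
            conn.execute("CREATE TABLE kv (key TEXT PRIMARY KEY, value TEXT)")
            conn.execute("INSERT INTO kv VALUES ('greeting', 'hello')")
            conn.commit()
        finally:
            conn.close()
        return mode
```

In WAL mode, writes are appended to a separate log file next to the database, so readers are not blocked by a writer. That fits a setup where several agent sessions use the same database.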

Yes. Messages and state persist across session restarts (auto-pruned after 24/48 hours).

All endpoints require bearer token authentication. The token is regenerated for each broker session, and the broker binds to localhost only. See Security for full details.
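As an illustration of what bearer token authentication looks like on the wire, the sketch below builds a request that carries the token in an `Authorization` header. It assumes the broker speaks HTTP on localhost:7899. The endpoint path is a placeholder, and the helper name is hypothetical. How a client obtains the current token is not covered here, so see Security for that.

```python
import urllib.request


def build_authed_request(token: str, path: str,
                         base_url: str = "http://127.0.0.1:7899") -> urllib.request.Request:
    """Build (but do not send) a request with a bearer token attached.

    Assumptions: the broker is reachable over HTTP at base_url, and `path`
    is whatever endpoint you intend to call; no real endpoint is implied.
    """
    req = urllib.request.Request(base_url + path)
    # Standard bearer scheme: "Authorization: Bearer <token>".
    req.add_header("Authorization", f"Bearer {token}")
    return req
```

Because the token is regenerated per broker session, a token saved from an earlier session should be expected to fail after the broker restarts.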

Run slm-mesh clean to kill zombie processes, then try again.

Run slm-mesh status to verify that the broker is running and peers are active. Run slm-mesh peers to see whether sessions are registered.

Locks auto-expire after 10 minutes (default). You can also restart the broker: slm-mesh stop && slm-mesh start.
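The idea behind auto-expiring locks can be sketched with a small in-memory lease table. This is an illustration of the general technique, not SLM Mesh's implementation. The class name, method names, and the renew-on-reacquire behavior are assumptions, and only the 10-minute default comes from the text above.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class ExpiringLocks:
    """Lease-style locks: each lock is held until its expiry time passes."""

    def __init__(self, ttl_seconds: float = 600.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds          # 600 s = the 10-minute default
        self._clock = clock              # injectable so expiry can be tested
        self._locks: Dict[str, Tuple[str, float]] = {}

    def acquire(self, path: str, owner: str) -> bool:
        """Take the lock unless another owner holds an unexpired lease."""
        now = self._clock()
        held = self._locks.get(path)
        if held is not None:
            holder, expires_at = held
            if now < expires_at and holder != owner:
                return False
        # Free, expired, or already ours: (re)start the lease.
        self._locks[path] = (owner, now + self._ttl)
        return True

    def holder(self, path: str) -> Optional[str]:
        """Return the current owner, or None if the lock is free or expired."""
        held = self._locks.get(path)
        if held is None or self._clock() >= held[1]:
            return None
        return held[0]
```

With this design a crashed agent cannot hold a file forever: once its lease runs out, the next `acquire` from another agent succeeds without any explicit cleanup.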